Lovalingo vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

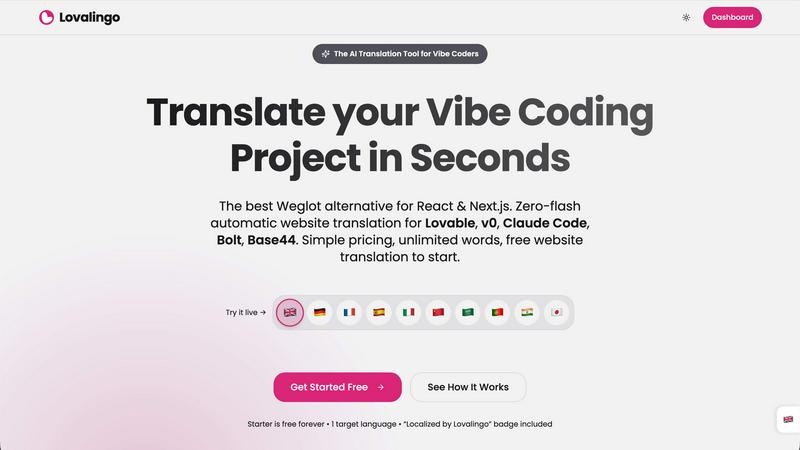

Lovalingo

Discover how Lovalingo instantly translates and indexes your React apps with zero flash.

Last updated: February 28, 2026

OpenMark AI benchmarks 100+ LLMs on your task for cost, speed, quality, and stability. It runs in the browser, and hosted runs need no provider API keys.


Overview

About Lovalingo

What if you could build for a global audience from day one without ever touching a translation file? Lovalingo is an AI-powered translation engine designed for vibe coding. It rethinks internationalization (i18n) for developers who build with AI tools like Lovable, v0, Claude Code, Bolt, and Base44. Instead of managing JSON string files and manual entries, Lovalingo automates the process: it integrates natively into the render cycle of your React or Next.js application, detecting and translating content in real time. You can ship features at the speed of AI while your app scales to support over 20 languages.

It's built for SaaS founders eyeing international markets, agencies delivering client projects rapidly, and any developer who would rather build features than manage translation spreadsheets. The core promise is simple: eliminate the i18n maintenance headache and unlock global growth.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
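To make the idea of stability across repeat runs concrete, here is a minimal sketch in Python. It is not OpenMark AI's implementation: the model names, the 0 to 1 score scale, and the `summarize_runs` helper are all assumptions made for illustration. It shows why a single high score can mislead when the spread across runs is large.

```python
from statistics import mean, pstdev


def summarize_runs(scores):
    """Return the mean and population standard deviation of repeat-run scores."""
    if not scores:
        raise ValueError("need at least one score")
    return {"mean": mean(scores), "spread": pstdev(scores)}


# Hypothetical quality scores (0 to 1) from five repeat runs of the same prompt.
# These numbers are invented for illustration, not measured results.
runs = {
    "model_a": [0.90, 0.88, 0.91, 0.89, 0.90],
    "model_b": [0.99, 0.60, 0.95, 0.70, 0.98],
}

summary = {name: summarize_runs(scores) for name, scores in runs.items()}

for name, stats in summary.items():
    print(f"{name}: mean={stats['mean']:.3f} spread={stats['spread']:.3f}")
```

In this made-up data, model_b produces the best single output (0.99) but has a lower average and a much wider spread than model_a, which is the kind of difference a one-shot comparison would hide.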

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
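One plausible way to read "quality relative to what you pay" is a simple ratio of scored quality to cost per request. The sketch below assumes that reading; it is not OpenMark AI's actual scoring formula, and the model names, scores, and prices are hypothetical.

```python
def cost_efficiency(quality, cost_per_request):
    """Quality points per dollar: an assumed definition, for illustration only."""
    if cost_per_request <= 0:
        raise ValueError("cost_per_request must be positive")
    return quality / cost_per_request


# Hypothetical benchmark rows: quality on a 0 to 1 scale, cost in dollars per request.
candidates = [
    {"name": "model_a", "quality": 0.90, "cost_per_request": 0.0040},
    {"name": "model_b", "quality": 0.60, "cost_per_request": 0.0010},
    {"name": "model_c", "quality": 0.88, "cost_per_request": 0.0011},
]

# The cheapest model and the most cost-efficient model need not be the same one.
cheapest = min(candidates, key=lambda c: c["cost_per_request"])
best_value = max(
    candidates,
    key=lambda c: cost_efficiency(c["quality"], c["cost_per_request"]),
)

print("cheapest:", cheapest["name"])
print("best value:", best_value["name"])
```

With these invented numbers, the cheapest option and the best-value option are different models, which is the distinction the paragraph above draws between cost efficiency and the lowest token price.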

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.