Crawlkit vs Fallom

Side-by-side comparison to help you choose the right AI tool.

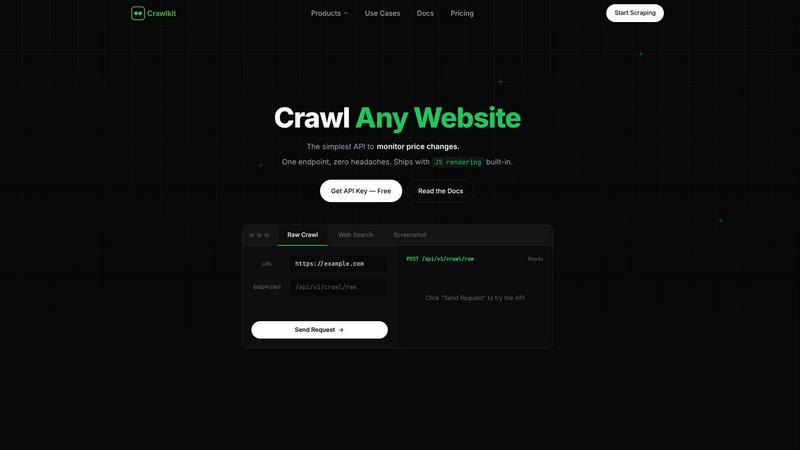

Crawlkit

Crawlkit turns any website into structured data with a single, simple API call.

Last updated: February 28, 2026

Fallom

Fallom offers real-time observability for your AI agents, providing complete visibility and cost tracking.

Last updated: February 28, 2026

Feature Comparison

Crawlkit

Unified API for Diverse Sources

Crawlkit consolidates access to a wide array of data sources under one cohesive API. Whether you need a company's details on LinkedIn, an influencer's metrics on Instagram, app reviews on the Play Store, or real-time web search results, you use the same simple interface. This removes the need to learn and maintain separate scraping tools or adapt to each website's structure: every source is reached through consistent, predictable calls.
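
The single-interface idea can be sketched in a few lines of Python. This is a minimal illustration, not Crawlkit's documented client: the base URL, the Bearer-token header, the POST-with-JSON-body convention, and parameter names such as `url` and `username` are all assumptions. Only the endpoint paths echo the ones named in the FAQ.

```python
import json
import urllib.request
from urllib.parse import urljoin

# Hypothetical base URL and auth scheme; check Crawlkit's docs for the real ones.
BASE_URL = "https://api.crawlkit.example/"


def build_request(endpoint: str, params: dict, api_key: str) -> urllib.request.Request:
    """Build one POST request; the same shape is reused for every data source."""
    body = json.dumps(params).encode("utf-8")
    return urllib.request.Request(
        urljoin(BASE_URL, endpoint.lstrip("/")),
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def fetch(endpoint: str, params: dict, api_key: str) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(build_request(endpoint, params, api_key), timeout=60) as resp:
        return json.load(resp)
```

Switching sources only changes the arguments: `fetch("/linkedin/company", {"url": ...}, key)` and `fetch("/instagram/profile", {"username": ...}, key)` go through the same code path.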

Infrastructure Abstraction

The platform handles the hardest parts of modern web scraping behind the scenes: managing rotating proxy pools to avoid IP blocks, executing JavaScript in headless browsers to render dynamic content, respecting target sites' rate limits, and parsing platform-specific HTML into clean JSON. You get the data without worrying about how it is fetched, so you can collect data at scale without the operational overhead.

Reliable & Complete Data Delivery

Crawlkit is engineered for reliability, so that the data you receive is complete and usable. It waits for full page loads on complex single-page applications (SPAs) and validates every response before delivery. An incomplete load or a parsing error is therefore caught before it reaches you, instead of surfacing later as an unexplained missing field. Consistently structured, complete outputs let you build robust systems and analyses on a steady stream of data rather than sporadic snapshots.

Transparent, Flexible Pricing

Curious about costs? Crawlkit uses a straightforward credit-based system: each API endpoint has a fixed credit cost, and you pay only for successful requests, with failed requests refunded. There are no monthly commitments and no rate limits, and purchased credits never expire. Volume discounts are available for larger needs. The result is clear, predictable pricing that encourages experimentation, with no hidden fees or punitive scaling.
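
The pay-only-for-success model can be sketched as a small credit ledger. This assumes credits are reserved when a request starts and refunded if it fails; the per-endpoint costs are invented for illustration and are not Crawlkit's prices.

```python
from dataclasses import dataclass, field

# Hypothetical per-endpoint credit costs; real Crawlkit prices are not stated here.
ENDPOINT_COST = {"/linkedin/company": 5, "/instagram/profile": 3, "/search": 1}


@dataclass
class CreditLedger:
    balance: int
    history: list = field(default_factory=list)

    def reserve(self, endpoint: str) -> int:
        """Debit the endpoint's fixed cost up front; refuse if the balance is too low."""
        cost = ENDPOINT_COST[endpoint]
        if cost > self.balance:
            raise ValueError(f"insufficient credits for {endpoint}")
        self.balance -= cost
        self.history.append(("debit", endpoint, cost))
        return cost

    def settle(self, endpoint: str, cost: int, succeeded: bool) -> None:
        """Refund the reserved cost if the request failed; keep it if it succeeded."""
        if not succeeded:
            self.balance += cost
            self.history.append(("refund", endpoint, cost))
```

Because a failed request nets to zero, retry loops in an automated pipeline cost credits only for the attempt that finally succeeds.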

Fallom

End-to-End LLM Tracing

Dive deep into the complete lifecycle of every AI interaction. Fallom automatically captures and visualizes the entire chain of events, from the initial user prompt through each sequential LLM call, tool invocation, and final response. You can explore crucial details like the exact inputs and outputs, token consumption, latency breakdowns, and the associated cost for each step. This granular, waterfall-style visibility is fundamental for understanding agent behavior, identifying bottlenecks, and ensuring the quality of complex, multi-step workflows.
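
A waterfall view is essentially a tree of timed spans. The minimal data model below illustrates the idea; it is not Fallom's trace schema, and the field names are assumptions.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One step in an agent run: the root request, an LLM call, or a tool call."""
    name: str
    start_ms: float
    end_ms: float
    cost_usd: float = 0.0
    tokens: int = 0
    children: list["Span"] = field(default_factory=list)

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms


def total_cost(span: Span) -> float:
    """Sum the cost of a span and all of its descendants."""
    return span.cost_usd + sum(total_cost(c) for c in span.children)


def waterfall(span: Span, depth: int = 0, origin: Optional[float] = None) -> list[str]:
    """Render one line per span: indented by depth, offset from the root's start time."""
    origin = span.start_ms if origin is None else origin
    lines = [
        f"{'  ' * depth}{span.name}: +{span.start_ms - origin:.0f}ms, "
        f"{span.duration_ms:.0f}ms, ${span.cost_usd:.4f}"
    ]
    for child in span.children:
        lines.extend(waterfall(child, depth + 1, origin))
    return lines
```

For a hypothetical flight-booking run with a planning call, a `flight_search` tool call, and an answer call as children of the root, `waterfall(root)` prints one indented line per step, which makes a slow tool call stand out immediately.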

Granular Cost Attribution & Analytics

Ever wondered exactly which model, team, or customer is driving your AI spend? Fallom brings complete financial transparency to your LLM operations. It automatically attributes costs down to the individual call level, allowing you to break down expenses by model provider, specific user, internal team, or even end customer. This enables precise budgeting, accurate chargebacks, and data-driven decisions about model selection, helping you optimize for both performance and cost-efficiency without any financial blind spots.
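
Per-call attribution comes down to computing each call's cost from its token counts and summing by whichever tag you care about. The model names and per-million-token prices below are placeholders, not real provider rates or Fallom's pricing data.

```python
from collections import defaultdict

# Hypothetical USD prices per million tokens; substitute your providers' actual rates.
PRICE_PER_MTOK = {
    "model-a": {"input": 3.00, "output": 15.00},
    "model-b": {"input": 0.25, "output": 1.25},
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one LLM call, computed from its input and output token counts."""
    p = PRICE_PER_MTOK[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def attribute(calls: list[dict], key: str) -> dict[str, float]:
    """Sum per-call costs grouped by any tag on the call: model, team, customer, etc."""
    totals: dict[str, float] = defaultdict(float)
    for c in calls:
        totals[c[key]] += call_cost(c["model"], c["input_tokens"], c["output_tokens"])
    return dict(totals)
```

The same list of calls answers different questions depending on the key: `attribute(calls, "customer")` supports chargebacks, while `attribute(calls, "model")` informs model selection.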

Enterprise Compliance & Audit Trails

Navigate the evolving landscape of AI regulation with built-in confidence. Fallom is engineered for regulated industries, providing immutable, comprehensive audit trails of all AI interactions. This includes full input/output logging, model version tracking, and user consent recording—features essential for meeting standards like GDPR, SOC 2, and the EU AI Act. Its configurable privacy modes also allow you to redact sensitive data or log only metadata, ensuring compliance without sacrificing essential observability.
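
One common way to make an audit log tamper-evident is hash chaining: each entry's hash covers the previous entry's hash, so editing any past record breaks verification. The source does not say how Fallom achieves immutability; this is a generic, swapped-in technique shown for illustration, with hypothetical record fields.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON form of the record."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], record: dict) -> dict:
    """Append a record whose hash chains to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev_hash, "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute every hash in order; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Chaining makes tampering detectable rather than impossible; true immutability also depends on where the log is stored and who can write to it.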

Real-Time Dashboard & Live Monitoring

Watch your AI systems operate in real time on an interactive dashboard. See live traces stream in, monitor overall system health, and spot anomalies in usage patterns, latency, or error rates as they happen. This immediate visibility lets teams identify and troubleshoot issues before they affect users, replacing reactive firefighting with proactive system management and keeping AI-powered applications reliable.
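
Spotting latency anomalies can be as simple as comparing each new sample against a rolling window. This rolling z-score detector is a generic technique, not Fallom's documented method; the window size, threshold, and warm-up count are arbitrary defaults.

```python
from collections import deque
from statistics import mean, pstdev


class LatencyMonitor:
    """Flag a latency sample that sits far above the recent rolling window."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_samples: int = 10):
        self.samples: deque = deque(maxlen=window)  # oldest samples fall off automatically
        self.threshold = threshold                  # standard deviations above the mean
        self.min_samples = min_samples              # warm-up before any flagging

    def observe(self, latency_ms: float) -> bool:
        """Return True if this sample is anomalous relative to the window seen so far."""
        anomalous = False
        if len(self.samples) >= self.min_samples:
            mu, sigma = mean(self.samples), pstdev(self.samples)
            anomalous = sigma > 0 and (latency_ms - mu) / sigma > self.threshold
        self.samples.append(latency_ms)
        return anomalous
```

Only upward deviations are flagged here, since unusually fast responses are rarely the problem; a production detector would also track error rates and use a more robust baseline.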

Use Cases

Crawlkit

Competitive Intelligence and Market Research

Imagine automatically tracking your competitors' digital footprints. With Crawlkit, you can systematically monitor their LinkedIn company pages for employee growth and job postings, scrape their app store listings for feature updates and user sentiment, or analyze their public Instagram engagement. This continuous stream of structured data feeds dynamic dashboards and reports, clarifying your market position and revealing strategic opportunities without manual, repetitive research.

Lead Generation and CRM Enrichment

Sales and marketing teams can transform raw lead lists into rich profiles. By feeding website URLs or company names into Crawlkit's LinkedIn API, you can automatically pull job titles, company information, professional summaries, and other key details to enrich contact records in your CRM. This process turns a simple list of names into a deep, actionable understanding of your potential customers, enabling highly personalized outreach and improving sales conversion rates.
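
Enrichment is usually a merge step: fill the blanks in an existing CRM record from the scraped profile without overwriting what your team already entered. The field names here are hypothetical, not Crawlkit's LinkedIn response schema.

```python
# Hypothetical profile fields to copy into the CRM record.
DEFAULT_FIELDS = ("job_title", "company", "summary")


def enrich(contact: dict, profile: dict, fields: tuple = DEFAULT_FIELDS) -> dict:
    """Return a copy of `contact` with empty fields filled from `profile`."""
    enriched = dict(contact)  # never mutate the caller's record
    for f in fields:
        # Only fill gaps: values already in the CRM take precedence over scraped ones.
        if not enriched.get(f) and profile.get(f):
            enriched[f] = profile[f]
    return enriched
```

Preferring existing values is a deliberate choice; teams that trust fresh scraped data more could invert the rule for specific fields.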

Social Media Monitoring and Growth Tracking

For brands, agencies, or influencers, understanding social performance is key. Crawlkit lets you programmatically track key Instagram metrics, such as follower counts, post engagement rates, and content themes, for your own account or competitors'. Schedule weekly scripts to pull this data and visualize growth trends over time. This shows which content resonates most and provides data-driven insights to inform your social media strategy.
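
Once weekly follower counts are stored, growth trends are a small calculation. This assumes you have already saved one `(week, follower_count)` snapshot per week from the Instagram profile data; the week labels are arbitrary strings.

```python
def weekly_growth(snapshots: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Percent change in follower count between consecutive weekly snapshots."""
    growth = []
    for (_, prev), (week, curr) in zip(snapshots, snapshots[1:]):
        # Guard against a zero baseline, where percent change is undefined.
        pct = (curr - prev) / prev * 100 if prev else float("nan")
        growth.append((week, round(pct, 2)))
    return growth
```

For example, `weekly_growth([("2026-W01", 10000), ("2026-W02", 10250)])` returns `[("2026-W02", 2.5)]`.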

Product Development and Review Analysis

App developers and product managers can harness direct user feedback at scale. Use Crawlkit to pull all reviews from the Google Play Store or Apple App Store for your app or competing products. Analyze this structured data to uncover common pain points, feature requests, and sentiment trends. This turns the chaotic noise of user reviews into a clear signal, guiding your product roadmap and helping you understand exactly what users love or wish was different.
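
A first pass at review analysis can be as simple as tallying which complaint themes appear in low-rated reviews. The review field names `rating` and `text`, the theme keywords, and the rating cutoff are all assumptions; serious analyses often use a sentiment or topic model instead of keyword matching.

```python
from collections import Counter

# Hypothetical theme keywords; tune these for your app or replace with a classifier.
THEMES = {
    "crash": ("crash", "freezes", "force close"),
    "battery": ("battery", "drain"),
    "pricing": ("price", "subscription", "expensive"),
}


def theme_counts(reviews: list[dict], max_rating: int = 2) -> Counter:
    """Count how many low-rated reviews mention each theme at least once."""
    counts: Counter = Counter()
    for r in reviews:
        if r["rating"] > max_rating:
            continue  # only look at negative reviews for pain points
        text = r["text"].lower()
        for theme, keywords in THEMES.items():
            if any(k in text for k in keywords):
                counts[theme] += 1
    return counts
```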

Fallom

Debugging Complex AI Agent Workflows

When a customer-facing agent fails to book a flight correctly, traditional logging offers only fragments of the story. Fallom allows developers to replay the entire agent session, examining the exact prompts, the data returned from each tool call (like flight search APIs), and the LLM's reasoning at each step. This complete context transforms debugging from a guessing game into a precise, efficient process, dramatically reducing mean time to resolution for intricate AI issues.

Implementing Transparent AI Cost Management

For a SaaS company embedding AI features, uncontrolled costs can quickly derail profitability. Fallom enables finance and engineering leaders to see precisely how much each product feature, customer segment, or internal project is spending on AI. This allows for accurate showback/chargeback models, informed decisions on pricing tiers, and identification of optimization opportunities, such as switching to a more cost-effective model for certain tasks without degrading user experience.

Ensuring Regulatory Compliance for AI Deployments

A healthcare or financial services firm deploying AI assistants must demonstrate strict adherence to data privacy and operational transparency regulations. Fallom provides the verifiable audit trail required, logging every interaction with user context, model versions used, and data processed. Its privacy controls ensure sensitive information can be protected, giving compliance officers the evidence needed to pass audits and build trust with users and regulators.

Optimizing Model Performance & A/B Testing

Choosing the right LLM is critical for application quality and cost. Fallom facilitates robust A/B testing by allowing teams to safely split traffic between different models or prompt versions. You can then compare their performance in real-time across key metrics like accuracy, latency, and cost per call directly within the platform. This data-driven approach takes the guesswork out of model selection and prompt engineering, ensuring you confidently deploy the best-performing configuration.
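
Splitting traffic safely usually means deterministic assignment, so a given user always sees the same variant for the life of an experiment. This hash-bucketing sketch is a generic technique; the source does not describe how Fallom assigns traffic, and the experiment and variant names are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: dict[str, float]) -> str:
    """Deterministically map a user to a variant according to the weights in `split`."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF  # roughly uniform value in [0, 1]
    cumulative = 0.0
    for variant, weight in split.items():
        cumulative += weight
        if bucket <= cumulative:
            return variant
    return list(split)[-1]  # guard against weights summing to slightly under 1.0
```

Including the experiment name in the hash keeps assignments independent across experiments, so a user in the "treatment" group of one test is not systematically in the treatment group of the next.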

Overview

About Crawlkit

Ever wondered how to turn the vast, unstructured data of the web into a clean, reliable stream of information for your projects? Crawlkit is the answer. It's a developer-first web data extraction platform that transforms the complex, often frustrating task of web scraping into a simple API call. Imagine needing data from LinkedIn for sales intelligence, Instagram for social listening, or app stores for market analysis. Instead of wrestling with headless browsers, proxy rotations, and anti-bot countermeasures, Crawlkit abstracts all that infrastructure away. It provides a single, powerful interface to extract structured data from virtually any website or platform, including search engines, social media, and app stores. Built for developers and data teams, its core value proposition is profound simplicity and reliability. You focus entirely on leveraging the data—building applications, enriching CRMs, or training models—while Crawlkit handles the intricate mechanics of collection, parsing, and delivery. With transparent, pay-as-you-go pricing and credits that never expire, it invites you to start small, experiment freely, and scale your data pipelines without lock-in or surprise commitments.

About Fallom

What if you could peer inside the conversations of your AI agents, understanding not just their final answers but the entire journey of reasoning, tool use, and decision-making? Fallom is built for exactly that. It is an AI-native observability platform designed from the ground up for the unique complexities of Large Language Model (LLM) and autonomous agent workloads. Aimed at engineering teams and organizations scaling their AI applications, Fallom provides a comprehensive, real-time window into every AI interaction in production. Its core value lies in turning opaque AI operations into transparent, analyzable, and optimizable processes. With a simple OpenTelemetry-native SDK, you can trace every LLM call, capturing prompts, outputs, token usage, latency, costs, and the precise sequence of tool calls. This goes beyond monitoring to contextual insight: by grouping traces by user, session, or customer, Fallom helps you understand not just what your AI is doing, but who it is for and why it matters. Built with enterprise-scale compliance in mind, it offers the audit trails and model governance needed to navigate regulations like the EU AI Act. Fallom helps you debug with confidence, allocate costs with precision, and build more reliable, efficient, and transparent AI systems.

Frequently Asked Questions

Crawlkit FAQ

What happens if a request fails?

Crawlkit operates on a "succeed or refund" principle. If an API request fails to return the complete, structured data as promised—due to a site being down, a block, or any other issue—the credits used for that request are automatically refunded to your account. This ensures you only pay for successful data delivery, making it risk-free to integrate into automated pipelines.

Do I need to manage proxies or browsers?

No, and that's one of the core benefits. Crawlkit completely abstracts away the infrastructure. The service manages large pools of rotating residential and data center proxies, headless browser instances, and all necessary configurations to navigate anti-bot measures. You simply make an API request and receive clean data, without any operational burden.

How is the data returned?

Data is returned as structured JSON via the API. The structure is clean, consistent, and well-documented for each endpoint (like /linkedin/company or /instagram/profile). For example, a LinkedIn company request might return fields like name, followers, employees, and description, ready to be inserted directly into your database or application logic.
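
A typed wrapper keeps the response shape explicit in your own code. This sketch assumes the `/linkedin/company` response is a flat JSON object containing the four fields named above, with numeric `followers` and `employees`; the real schema may nest or format these differently.

```python
import json
from dataclasses import dataclass


@dataclass
class LinkedInCompany:
    name: str
    followers: int
    employees: int  # assumed numeric; real data might be a range such as "51-200"
    description: str


REQUIRED = ("name", "followers", "employees", "description")


def parse_company(raw: str) -> LinkedInCompany:
    """Check the expected fields are present, then build a typed record (extra fields are ignored)."""
    data = json.loads(raw)
    missing = [f for f in REQUIRED if f not in data]
    if missing:
        raise ValueError(f"response missing fields: {missing}")
    return LinkedInCompany(
        name=str(data["name"]),
        followers=int(data["followers"]),
        employees=int(data["employees"]),
        description=str(data["description"]),
    )
```

Failing loudly on a missing field means a schema change shows up as an error at ingestion time rather than as silent gaps in your database.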

Can I request a new data source or API endpoint?

Absolutely. Crawlkit encourages users to reach out with requests for new platforms or specific data points. Their philosophy, "Need an API we don't have yet? Talk to us, we'll build it," highlights a commitment to expanding their coverage based on user needs. This makes it a collaborative platform that grows with the curiosities and requirements of its developer community.

Fallom FAQ

How does Fallom integrate with my existing application?

Fallom is built on the open standard OpenTelemetry (OTEL), making integration remarkably straightforward. You simply install a single, lightweight SDK into your application code. This SDK automatically instruments your LLM calls—whether you use OpenAI, Anthropic, Google, or other providers—and sends the rich tracing data to the Fallom platform. This means no vendor lock-in and a setup process that can be completed in under five minutes, with no changes to your core application logic.

Can Fallom handle sensitive or private data?

Absolutely. Fallom is designed with enterprise-grade security and privacy controls. It offers a configurable "Privacy Mode" where you can choose to redact specific data fields, log only transaction metadata (like timestamps and token counts), or disable content capture entirely for sensitive environments. This allows you to maintain full observability over system performance and costs while ensuring user data and confidential information are protected according to your policies.
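
The privacy behaviors described above can be modeled as a filter applied to each trace record before it is stored. The mode names, field names, and `[REDACTED]` marker are hypothetical, not Fallom's configuration; in this sketch, disabling content capture is treated the same as metadata-only logging.

```python
from enum import Enum


class PrivacyMode(Enum):
    FULL = "full"           # keep everything, including prompts and outputs
    REDACT = "redact"       # mask selected fields, keep the rest
    METADATA_ONLY = "meta"  # keep only non-content metadata (no content capture)


# Hypothetical metadata fields that are safe to keep in every mode.
METADATA_FIELDS = ("timestamp", "model", "input_tokens", "output_tokens", "latency_ms", "cost_usd")


def apply_privacy(trace: dict, mode: PrivacyMode, redact_fields: tuple = ()) -> dict:
    """Return a filtered copy of a trace record according to the privacy mode."""
    if mode is PrivacyMode.FULL:
        return dict(trace)
    if mode is PrivacyMode.REDACT:
        return {k: ("[REDACTED]" if k in redact_fields else v) for k, v in trace.items()}
    # METADATA_ONLY: allow-list metadata so unknown or new fields are dropped by default.
    return {k: v for k, v in trace.items() if k in METADATA_FIELDS}
```

Using an allow-list for metadata-only mode is the safer default: a newly added field containing user content is excluded unless someone explicitly marks it as metadata.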

What makes Fallom different from traditional APM tools?

Traditional Application Performance Monitoring (APM) tools are built for conventional software and struggle to interpret the non-deterministic, language-heavy nature of LLM operations. Fallom is AI-native, meaning it understands concepts unique to this domain: it traces semantic prompts and completions, visualizes tool-call sequences, attributes costs per token, and evaluates output quality. It provides the specific context and metrics that AI engineers need, which generic APM tools simply cannot surface.

How does Fallom help with testing and quality assurance?

Fallom includes capabilities for running evaluations on your LLM outputs. You can define custom checks for accuracy, relevance, hallucination rates, or other metrics and run them against sampled or all production traces. This allows you to catch regressions in model performance or prompt effectiveness before they widely impact users. Coupled with its Prompt Store for versioning and A/B testing, it creates a robust framework for continuous improvement of your AI's quality.
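
Custom evaluation checks amount to predicates run over sampled traces and reported as pass rates. The two checks below are simple heuristics chosen for illustration (a real hallucination check typically needs a reference answer or a judge model), and the trace field `output` is an assumption, not Fallom's schema.

```python
import random
from typing import Callable

Check = Callable[[dict], bool]


def max_length(limit: int) -> Check:
    """Check factory: the output must not exceed `limit` characters."""
    return lambda t: len(t["output"]) <= limit


def mentions_all(*terms: str) -> Check:
    """Check factory: the output must mention every required term (case-insensitive)."""
    return lambda t: all(term.lower() in t["output"].lower() for term in terms)


def evaluate(traces: list[dict], checks: dict[str, Check],
             sample_rate: float = 1.0, seed: int = 0) -> dict[str, float]:
    """Run each check on a random sample of traces and return its pass rate."""
    rng = random.Random(seed)  # seeded so repeated runs sample the same traces
    sample = [t for t in traces if rng.random() < sample_rate]
    if not sample:
        return {name: float("nan") for name in checks}
    return {name: sum(check(t) for t in sample) / len(sample) for name, check in checks.items()}
```

Tracking these pass rates per prompt version turns a vague sense that "quality dropped" into a specific check whose rate fell after a specific change.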

Alternatives

Crawlkit Alternatives

Crawlkit is a modern web data extraction platform in the analytics and data category. It is designed for developers and data teams who need to bypass the complexities of proxies, browsers, and anti-bot systems to reliably gather web data through a simple API. Users explore alternatives for a variety of reasons: specific budget constraints, a need for different features such as specialized data sources or integration capabilities, or simply a desire to survey the landscape before committing to a tool that will power critical data pipelines. When evaluating other options, consider several key factors: robust JavaScript rendering for modern websites, high success rates against anti-bot measures, and the scalability to handle your project's volume. The ideal platform should, like Crawlkit, let you focus on using data rather than wrestling with the mechanics of collecting it.

Fallom Alternatives

Fallom is a specialized observability platform for AI development, focusing on the unique challenges of monitoring Large Language Model and agent-based applications. It provides deep visibility into prompts, costs, and performance, helping teams build reliable and transparent AI systems. Developers and organizations often explore alternatives for various reasons. They might be seeking a different pricing model, a platform that integrates more tightly with their existing infrastructure, or a solution with a broader or narrower feature scope that better matches their specific stage of AI adoption. When evaluating other tools in this space, consider your core needs. Look for robust tracing capabilities, granular cost attribution, and compliance features if required. The ease of instrumentation and the depth of context provided for each AI interaction are also key factors that determine how effectively you can debug, optimize, and govern your LLM workloads.