Crawlkit

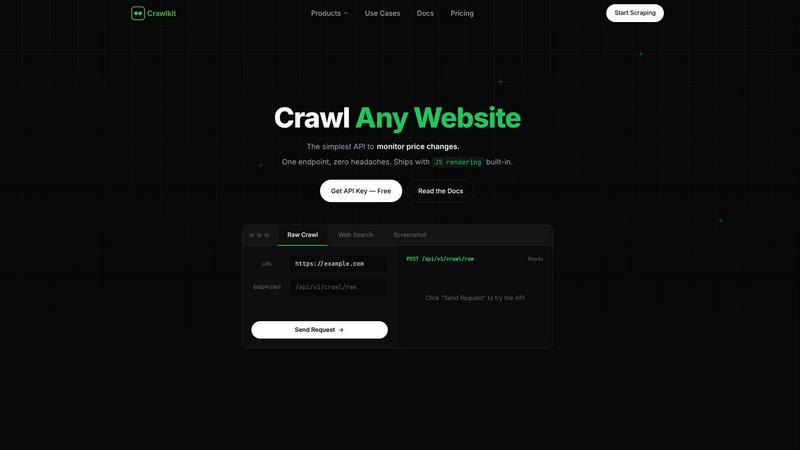

Crawlkit turns any website into structured data with a single, simple API call.

About Crawlkit

How do you turn the vast, unstructured data of the web into a clean, reliable stream of information for your projects? Crawlkit is a developer-first web data extraction platform that reduces the complex, often frustrating task of web scraping to a single API call. Suppose you need data from LinkedIn for sales intelligence, Instagram for social listening, or app stores for market analysis. Instead of wrestling with headless browsers, proxy rotation, and anti-bot countermeasures, you let Crawlkit handle that infrastructure. It provides one interface for extracting structured data from a wide range of websites and platforms, including search engines, social media, and app stores. Built for developers and data teams, its core value proposition is simplicity and reliability: you focus on using the data (building applications, enriching CRMs, or training models) while Crawlkit handles collection, parsing, and delivery. With transparent, pay-as-you-go pricing and credits that never expire, you can start small, experiment freely, and scale your data pipelines without lock-in or surprise commitments.

Features of Crawlkit

Unified API for Diverse Sources

Crawlkit consolidates access to a wide array of data sources under one cohesive API. Whether you need a company's details from LinkedIn, an influencer's metrics from Instagram, app reviews from the Play Store, or real-time web search results, you use the same simple interface. This eliminates the need to learn and maintain multiple scraping tools or adapt to different website structures, so every source is reached through consistent, predictable calls.

Infrastructure Abstraction

The platform handles the most challenging aspects of modern web scraping behind the scenes. This includes managing rotating proxy pools to avoid IP blocks, executing JavaScript in headless browsers to render dynamic content, respecting the target sites' rate limits, and parsing platform-specific HTML into clean JSON. You get the data without having to worry about how it is fetched, which lets you collect data at scale without operational overhead.

Reliable & Complete Data Delivery

Crawlkit is engineered for reliability and aims to deliver complete, usable data on every successful request. It waits for full page loads on complex single-page applications (SPAs) and validates all responses before delivery. This means you do not have to wonder whether a missing data field is due to a parsing error or a failed load. Structured, complete outputs let you build robust systems and analyses with confidence, turning sporadic data glimpses into a steady stream of insight.

Transparent, Flexible Pricing

Crawlkit uses a straightforward credit-based system in which each API endpoint has a fixed cost and you pay only for successful requests (failed requests are refunded). There are no monthly commitments, no rate limits, and purchased credits never expire. This model encourages experimentation and scales with you, with volume discounts for larger needs. Pricing stays clear and predictable, without hidden fees or punitive scaling.
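To make the credit model concrete, here is a minimal sketch of how a client might track its own spend. The per-endpoint costs, the `CreditLedger` class, and the starting balance are all hypothetical; only the rules themselves (fixed cost per endpoint, no charge for a failed request) come from the description above.

```python
from dataclasses import dataclass, field

# Hypothetical per-endpoint credit costs; real prices are not stated here.
ENDPOINT_COST = {"/linkedin/company": 2, "/instagram/profile": 1}


@dataclass
class CreditLedger:
    balance: int
    history: list = field(default_factory=list)

    def record(self, endpoint: str, succeeded: bool) -> int:
        """Deduct the endpoint's fixed cost only if the request succeeded.

        A failed request nets to zero, mirroring the refund rule.
        """
        cost = ENDPOINT_COST[endpoint]
        if cost > self.balance:
            raise ValueError("not enough credits")
        charged = cost if succeeded else 0
        self.balance -= charged
        self.history.append((endpoint, succeeded, charged))
        return charged


ledger = CreditLedger(balance=10)
ledger.record("/linkedin/company", succeeded=True)
ledger.record("/instagram/profile", succeeded=False)  # refunded, costs nothing
ledger.record("/instagram/profile", succeeded=True)
print(ledger.balance)
```

Keeping a local history like this is one way to reconcile your own request log against the credits shown in your account.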

Use Cases of Crawlkit

Competitive Intelligence and Market Research

Imagine automatically tracking your competitors' digital footprints. With Crawlkit, you can systematically monitor their LinkedIn company pages for employee growth and job postings, scrape their app store listings for feature updates and user sentiment, or analyze their public Instagram engagement. This continuous stream of structured data feeds dashboards and reports that show market position and reveal strategic opportunities without manual, repetitive research.

Lead Generation and CRM Enrichment

Sales and marketing teams can transform raw lead lists into rich profiles. By feeding website URLs or company names into Crawlkit's LinkedIn API, you can automatically pull job titles, company information, professional summaries, and other key details to enrich contact records in your CRM. This process turns a simple list of names into a deep, actionable understanding of your potential customers, enabling highly personalized outreach and improving sales conversion rates.

Social Media Monitoring and Growth Tracking

For brands, agencies, or influencers, understanding social performance is key. Crawlkit lets you programmatically track key Instagram metrics, such as follower counts, post engagement rates, and content themes, for your own account or your competitors' accounts. Schedule weekly scripts to pull this data and visualize growth trends over time. The results show which content resonates most and give you data-driven insights to inform your social media strategy.
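As a rough sketch of the trend step, the snippet below computes week-over-week follower growth from stored snapshots. The snapshot values are made up, and the `weekly_growth` helper is illustrative; fetching the numbers from the API is left out.

```python
from datetime import date

# Made-up weekly follower snapshots: (snapshot date, follower count).
snapshots = [
    (date(2024, 1, 1), 1000),
    (date(2024, 1, 8), 1050),
    (date(2024, 1, 15), 1029),
]


def weekly_growth(rows):
    """Return (date, percent change vs. the previous snapshot) pairs."""
    ordered = sorted(rows)  # sort by date so gaps in input order do not matter
    changes = []
    for (_, prev), (day, curr) in zip(ordered, ordered[1:]):
        changes.append((day, round((curr - prev) / prev * 100, 2)))
    return changes


growth = weekly_growth(snapshots)
for day, pct in growth:
    print(day.isoformat(), f"{pct:+.2f}%")
```

The sketch assumes every previous count is non-zero; a brand-new account with zero followers would need a guard before dividing.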

Product Development and Review Analysis

App developers and product managers can harness direct user feedback at scale. Use Crawlkit to pull all reviews from the Google Play Store or Apple App Store for your app or competing products. Analyze this structured data to uncover common pain points, feature requests, and sentiment trends. This turns the chaotic noise of user reviews into a clear signal, guiding your product roadmap and helping you understand exactly what users love or wish was different.
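One simple way to start on the analysis is to count frequent terms in low-rated reviews. This is a minimal sketch: the reviews are made up, the `rating` and `text` field names are assumptions about the response shape, and the tiny stopword list stands in for proper text processing.

```python
from collections import Counter

# Made-up reviews; the "rating" and "text" field names are assumptions.
reviews = [
    {"rating": 1, "text": "App crashes on login"},
    {"rating": 2, "text": "Login is slow and the app crashes"},
    {"rating": 5, "text": "Love the new dark mode"},
]

STOPWORDS = {"the", "and", "is", "on", "a", "app"}


def pain_points(rows, max_rating=2, top=3):
    """Count the most frequent non-stopword terms in low-rated reviews."""
    counts = Counter()
    for row in rows:
        if row["rating"] <= max_rating:
            words = row["text"].lower().split()
            counts.update(w for w in words if w not in STOPWORDS)
    return counts.most_common(top)


print(pain_points(reviews))
```

Term counts are only a first pass; grouping by app version or applying a sentiment model would give a sharper picture of trends.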

Frequently Asked Questions

What happens if a request fails?

Crawlkit operates on a "succeed or refund" principle. If an API request fails to return the complete, structured data as promised, whether because a site is down, the request is blocked, or for any other reason, the credits used for that request are automatically refunded to your account. You pay only for successful data delivery, which lowers the risk of integrating Crawlkit into automated pipelines.

Do I need to manage proxies or browsers?

No, and that's one of the core benefits. Crawlkit completely abstracts away the infrastructure. The service manages large pools of rotating residential and data center proxies, headless browser instances, and all necessary configurations to navigate anti-bot measures. You simply make an API request and receive clean data, without any operational burden.

How is the data returned?

Data is returned as structured JSON via the API. The structure is clean, consistent, and well-documented for each endpoint (like /linkedin/company or /instagram/profile). For example, a LinkedIn company request might return fields like name, followers, employees, and description, ready to be inserted directly into your database or application logic.
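As a rough illustration of consuming such a response, the sketch below builds a request URL and parses a sample payload into a typed record. The endpoint path and the four field names come from the answer above; the base URL, the `url` query parameter, the field types, the sample values, and the helper names are assumptions for illustration, not Crawlkit's documented API.

```python
import json
from dataclasses import dataclass
from urllib.parse import urlencode

# Hypothetical base URL, used only for illustration.
BASE_URL = "https://api.example.com"


@dataclass
class LinkedInCompany:
    name: str
    followers: int
    employees: int
    description: str


def build_url(endpoint: str, **params: str) -> str:
    """Join the base URL, an endpoint path, and URL-encoded query parameters."""
    query = urlencode(params)
    return f"{BASE_URL}{endpoint}?{query}" if query else f"{BASE_URL}{endpoint}"


def parse_company(payload: str) -> LinkedInCompany:
    """Parse a JSON response body; raises KeyError if a field is missing."""
    data = json.loads(payload)
    return LinkedInCompany(
        name=data["name"],
        followers=int(data["followers"]),
        employees=int(data["employees"]),
        description=data["description"],
    )


# Made-up sample payload shaped like the fields named above.
SAMPLE = '{"name": "Acme", "followers": 1200, "employees": 50, "description": "Widgets"}'

company = parse_company(SAMPLE)
print(build_url("/linkedin/company", url="https://www.linkedin.com/company/acme"))
print(company)
```

Parsing into a dataclass surfaces a missing or renamed field at the API boundary rather than deep inside application code; check the endpoint documentation for the real field names and types before relying on this shape.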

Can I request a new data source or API endpoint?

Absolutely. Crawlkit encourages users to reach out with requests for new platforms or specific data points. Its stated philosophy, "Need an API we don't have yet? Talk to us, we'll build it," signals a commitment to expanding coverage based on user needs. This makes it a collaborative platform that grows with the requirements of its developer community.

Similar to Crawlkit

Subiq

Subiq reveals where your SaaS budget is bleeding, tracking every subscription and renewal for small teams in one dashboard.

Toon Tone

Toon Tone is a fun daily game where you guess cartoon character colors using HSB sliders to boost your color memory skills.

FX Radar

FX Radar lets you discover why markets move with live news and AI analysis, giving you clarity in seconds.

GhostlyX Privacy-First Web Analytics

Discover what your website visitors truly need with GhostlyX, the privacy-first analytics platform that delivers clear insights without tracking.

Pterocos

Discover a free, live HTML, CSS, and JS playground with an AI chat assistant and a powerful Monaco editor for rapid coding and testing.

Microplastic Intake App

Research-backed microplastic intake assessment.

Webleadr

Discover and connect with web design leads and businesses without websites worldwide, all in just a few clicks with Webleadr.

Metric Nexus

Metric Nexus simplifies your marketing data by unifying it in one place and allowing you to ask questions in plain English with Claude.