Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

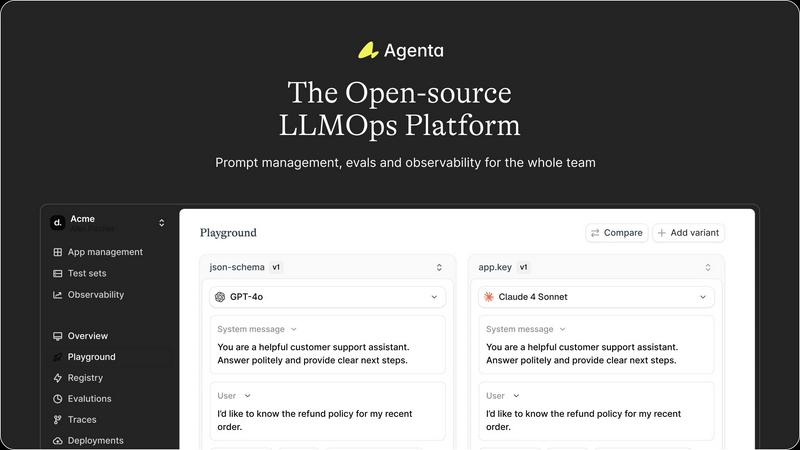

Discover how Agenta's open-source platform helps teams build and manage reliable LLM applications together.

Last updated: March 1, 2026

OpenMark AI lets you benchmark over 100 LLMs on your specific tasks, providing instant insights into cost, speed, quality, and stability.

Last updated: March 26, 2026

Visual Comparison

Agenta

OpenMark AI

Feature Comparison

Agenta

Unified Playground & Experimentation

Dive into a centralized workspace where you can experiment with different prompts, parameters, and foundation models side-by-side. This unified playground allows your entire team to iterate rapidly, compare results in real-time, and maintain a complete version history of every change. Found a problematic output in production? Simply save it to a test set and immediately begin debugging it within the same interactive environment, seamlessly closing the loop between observation and experimentation.

Automated & Holistic Evaluation

Replace intuition with evidence through a systematic evaluation framework. Agenta enables you to create automated test suites using LLM-as-a-judge, custom code evaluators, or built-in metrics. Crucially, it evaluates the full trace of complex AI agents, allowing you to scrutinize each intermediate step in the reasoning process, not just the final output. This deep visibility ensures you can validate that changes genuinely improve performance before they ever reach a user.

Production Observability & Debugging

Gain crystal-clear visibility into your live AI applications. Agenta traces every request, providing a detailed map of your LLM's execution. When errors occur, you can pinpoint the exact failure point—was it the prompt, the model, or a specific function? Furthermore, you can annotate traces with your team or gather direct feedback from users, and with a single click, turn any problematic trace into a permanent test case for future experiments.

Collaborative Workflow for Cross-Functional Teams

Break down the walls between technical and non-technical stakeholders. Agenta provides a safe, intuitive UI for domain experts and product managers to directly edit prompts, run evaluations, and compare experiments without writing code. This fosters true collaboration, ensuring the people with the deepest subject matter expertise can actively shape the AI's behavior, while developers maintain full API and UI parity for programmatic control.

OpenMark AI

User-Friendly Task Configuration

OpenMark AI boasts a straightforward task configuration interface, allowing users to describe the tasks they want to benchmark in simple language. This eliminates the need for technical knowledge, making it accessible to all team members.

Comprehensive Model Comparison

The platform supports benchmarking against over 100 AI models, providing users with the ability to compare real-time results across a diverse range of tasks. This feature ensures that teams can find the best-performing model for their specific needs.

Real-Time Performance Metrics

Users can evaluate crucial performance metrics like cost per request and latency during benchmarking sessions. This data allows teams to understand the economic implications of their choices and helps in selecting models that deliver the best value.

Consistency Checks

OpenMark AI enables users to test the consistency of model outputs by running the same task multiple times. This feature is vital for teams that require reliable and repeatable results, ensuring that they can trust the models they choose.

Use Cases

Agenta

Streamlining Enterprise Chatbot Development

Imagine a financial services company building a customer support chatbot. With Agenta, product managers can draft and tweak prompt variations in the UI to ensure compliant and helpful tones, while developers integrate different models from OpenAI or Anthropic. The team can systematically evaluate each version against a test suite of tricky customer queries, monitor its performance in a staging environment, and quickly debug any hallucinated or incorrect advice before a full rollout.

Building and Tuning Complex AI Agents

For teams developing sophisticated multi-step agents that handle tasks like research or data analysis, Agenta is indispensable. Developers can use the platform to trace the agent's entire chain of thought, identifying which tool call or reasoning step failed. They can create evaluations that assess the quality of each intermediate result, not just the final answer, enabling precise tuning of the agent's logic and prompts for maximum reliability.

Managing Rapid Prompt Iteration for Content Generation

A marketing team using LLMs to generate ad copy or blog posts can use Agenta as their central experimentation hub. Writers and marketers can collaborate with engineers to A/B test different creative prompts and models, evaluating outputs for brand voice, SEO effectiveness, and engagement. All successful prompts are versioned and stored, creating a reusable library of high-performing templates that accelerate future content creation.

Academic Research and LLM Benchmarking

Researchers and data scientists can leverage Agenta to conduct rigorous, reproducible experiments. The platform allows them to manage countless prompt and parameter combinations, run large-scale automated evaluations against standardized benchmarks, and meticulously track results. This structured approach turns ad-hoc research into a formalized process, making it easier to validate hypotheses and publish findings.

OpenMark AI

Model Selection for AI Features

Teams can use OpenMark AI to systematically evaluate different models to find the most suitable option for their intended AI features. This helps ensure that the chosen model aligns with both performance and cost expectations.

Cost Analysis for API Usage

By comparing the actual costs associated with different models, teams can make informed financial decisions about which APIs to use. This is particularly useful for budgeting and resource allocation in projects.

Quality Assurance in AI Outputs

OpenMark AI allows teams to assess the quality of outputs across various models, helping to ensure that the final product meets user expectations and project requirements. This is crucial for maintaining high standards in AI applications.

Benchmarking for Research and Development

OpenMark AI serves as a powerful tool for R&D teams looking to explore the capabilities of emerging models. By benchmarking new technologies, teams can stay ahead of the curve and innovate more effectively.

Overview

About Agenta

What if the journey of building with large language models felt less like a perilous expedition and more like a guided discovery? Agenta is an open-source LLMOps platform crafted to illuminate the path for AI teams navigating the complex terrain of modern LLM development. It transforms the often chaotic and intuitive art of prompt engineering into a structured, collaborative, and evidence-based science. At its heart, Agenta addresses a fundamental paradox: while LLMs are inherently stochastic and unpredictable, the processes teams use to manage, evaluate, and deploy them should be anything but. It serves as the central nervous system for cross-functional teams—including engineers, product managers, and domain experts—who are determined to move beyond scattered prompts in Slack, siloed workflows, and risky "vibe testing." By integrating prompt management, automated evaluation, and production observability into a single, cohesive environment, Agenta becomes the single source of truth for the entire LLM application lifecycle. Its core mission is to empower teams to experiment swiftly, evaluate rigorously, and debug confidently, ultimately turning guesswork into reliable development and shipping robust, high-performing AI applications faster.

About OpenMark AI

OpenMark AI is an innovative web application designed for the benchmarking of large language models (LLMs) at the task level. It empowers developers and product teams to conduct thorough assessments of various AI models by simply describing the tasks they wish to evaluate in plain language. With OpenMark AI, users can run identical prompts against a wide array of models in a single session, enabling direct comparisons across several critical metrics such as cost per request, latency, scored quality, and consistency across multiple runs. This capability allows teams to identify variance in outputs, ensuring they do not rely on a single fortunate response but rather on comprehensive data.

What sets OpenMark AI apart is its user-friendly interface and ease of use. There's no need for complex API configurations or coding; everything is handled within the platform. This makes it ideal for those who need to validate their model choices before deploying AI features. By using real API calls instead of cached data, OpenMark AI provides insights into the actual performance and cost-efficiency of models, guiding users toward informed decisions tailored to specific workflows. With free and paid plans available, OpenMark AI is accessible for teams worldwide looking to optimize their AI implementations.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is fully open-source. You can dive into the codebase on GitHub, contribute to its development, and self-host the entire platform on your own infrastructure. This ensures there is no vendor lock-in and provides full transparency into how the platform operates, aligning with the needs of many development and research teams.

How does Agenta handle different LLM providers and frameworks?

Agenta is designed to be model-agnostic and framework-flexible. It seamlessly integrates with major providers like OpenAI, Anthropic, and Cohere, as well as popular development frameworks such as LangChain and LlamaIndex. This means you can use the best model for your specific task and switch providers as needed, all within Agenta's consistent management and evaluation workflow.

Can non-technical team members really use Agenta effectively?

Absolutely. A core design principle of Agenta is to democratize the LLM development process. The platform offers an intuitive web UI that allows product managers, domain experts, and other non-coders to safely edit prompts, launch evaluation tests, and visually compare experiment results. This bridges the gap between technical implementation and subject matter expertise.

How does Agenta help with debugging production issues?

When an error occurs in a live application, Agenta's observability traces capture the complete request lifecycle. You can examine the exact prompt sent, the model's raw response, and the output of any intermediate steps. This detailed traceability transforms debugging from a guessing game into a precise investigation, allowing you to quickly identify whether the root cause was a prompt ambiguity, a model limitation, or an integration error.

OpenMark AI FAQ

What types of tasks can I benchmark with OpenMark AI?

OpenMark AI supports a wide variety of tasks, including but not limited to classification, translation, data extraction, research Q&A, and image analysis. This versatility allows users to test models across many applications.

Do I need to configure API keys to use OpenMark AI?

No, OpenMark AI simplifies the benchmarking process by eliminating the need for users to configure separate API keys for different models. The platform handles this automatically, allowing for a seamless experience.

How can I ensure the consistency of model outputs?

OpenMark AI allows users to run multiple iterations of the same task, enabling teams to evaluate the consistency of outputs. This feature is essential for applications where reliability and predictability are crucial.

Are there any costs associated with using OpenMark AI?

OpenMark AI offers both free and paid plans, with details available in the in-app billing section. This provides flexibility for teams of different sizes and budgets, ensuring that everyone can access powerful benchmarking tools.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to bring order and collaboration to the often chaotic process of building applications with large language models. It acts as a central hub for teams to experiment, evaluate, and manage their LLM prompts and workflows in a structured, evidence-based way. Users often explore alternatives for various reasons. Some may need a solution with different pricing models, whether a fully managed service or a different open-source license. Others might seek specific integrations, deployment options, or feature sets that align more closely with their team's unique workflow or technical stack. When evaluating options, it's wise to consider your team's core needs. Look for tools that foster collaboration across roles, provide robust testing and evaluation capabilities, and offer the flexibility to work with multiple AI models. The goal is to find a platform that turns the unpredictable nature of LLM development into a reliable, repeatable engineering practice.

OpenMark AI Alternatives

OpenMark AI is a web application designed for task-level benchmarking of large language models (LLMs). It enables users to evaluate over 100 models based on cost, speed, quality, and stability, all in a seamless browser-based environment. This platform is particularly valuable for developers and product teams who need to make informed decisions about which AI model to implement, ensuring that both performance and cost-effectiveness are considered. Users often seek alternatives to OpenMark AI due to various factors such as pricing structures, feature sets, and specific platform requirements. When exploring other options, it's important to consider aspects like ease of use, the breadth of model support, and how well the alternative addresses your unique benchmarking needs. A thorough understanding of these elements can help streamline your decision-making process in selecting the best tool for your AI projects.