Agenta vs CloudBurn

Side-by-side comparison to help you choose the right AI tool.

Agenta

Discover how Agenta's open-source platform helps teams build and manage reliable LLM applications together.

Last updated: March 1, 2026

CloudBurn

Discover what your code changes will cost before they deploy to production.

Last updated: March 1, 2026

Visual Comparison

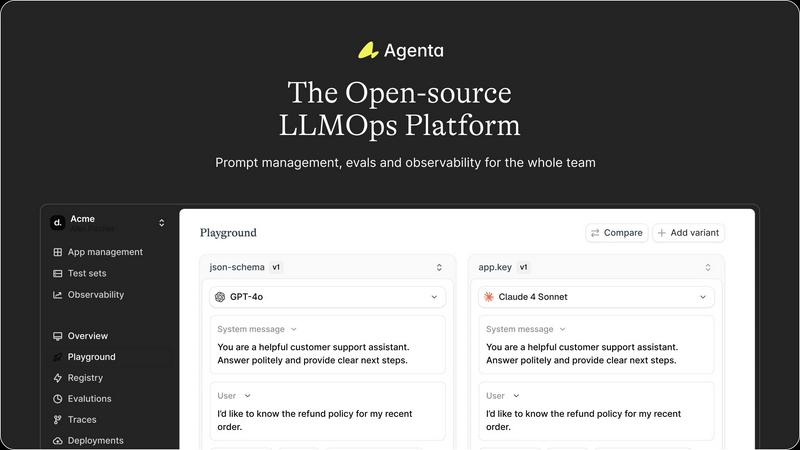

Agenta

CloudBurn

Feature Comparison

Agenta

Unified Playground & Experimentation

Dive into a centralized workspace where you can experiment with different prompts, parameters, and foundation models side by side. This unified playground lets your entire team iterate rapidly, compare results in real time, and keep a complete version history of every change. Found a problematic output in production? Save it to a test set and start debugging it in the same interactive environment, closing the loop between observation and experimentation.
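The version history and side-by-side comparison described above can be pictured as an append-only log of prompt configurations. The sketch below is a minimal illustration under that assumption; `PromptVersion`, `PromptHistory`, and the model name `model-a` are hypothetical and do not reflect Agenta's actual storage or API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptVersion:
    """Immutable snapshot of one prompt configuration (hypothetical schema)."""
    version: int
    template: str
    model: str
    temperature: float


@dataclass
class PromptHistory:
    """Append-only history: each edit adds a new version instead of overwriting the last."""
    versions: list[PromptVersion] = field(default_factory=list)

    def commit(self, template: str, model: str, temperature: float) -> PromptVersion:
        snapshot = PromptVersion(len(self.versions) + 1, template, model, temperature)
        self.versions.append(snapshot)
        return snapshot

    def get(self, version: int) -> PromptVersion:
        return self.versions[version - 1]  # versions are numbered from 1

    def changed_fields(self, a: int, b: int) -> dict[str, tuple]:
        """Return {field: (value in a, value in b)} for every field that differs."""
        old, new = self.get(a), self.get(b)
        return {
            name: (getattr(old, name), getattr(new, name))
            for name in ("template", "model", "temperature")
            if getattr(old, name) != getattr(new, name)
        }


# Usage: two iterations on the same prompt, then a comparison of what changed.
history = PromptHistory()
history.commit("Summarize: {text}", "model-a", 0.7)
history.commit("Summarize in one sentence: {text}", "model-a", 0.2)
print(history.changed_fields(1, 2))
```

Because old versions are never overwritten, any earlier configuration can be restored or compared against the current one.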

Automated & Holistic Evaluation

Replace intuition with evidence through a systematic evaluation framework. Agenta enables you to create automated test suites using LLM-as-a-judge, custom code evaluators, or built-in metrics. Crucially, it evaluates the full trace of complex AI agents, allowing you to scrutinize each intermediate step in the reasoning process, not just the final output. This deep visibility ensures you can validate that changes genuinely improve performance before they ever reach a user.
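To make the evaluation idea concrete, here is a minimal sketch of custom code evaluators applied both to a whole test set and to each step of an agent trace. The `Evaluator` signature, the step dictionary layout, and all helper names are hypothetical illustrations, not Agenta's SDK. An LLM-as-a-judge evaluator could plug into the same signature, but it needs a model call, so it is left out here.

```python
from typing import Callable

# Hypothetical evaluator signature: (expected, actual) -> score between 0 and 1.
Evaluator = Callable[[str, str], float]


def exact_match(expected: str, actual: str) -> float:
    """Built-in-style metric: 1.0 if the outputs match, ignoring case and surrounding spaces."""
    return 1.0 if expected.strip().lower() == actual.strip().lower() else 0.0


def keyword_coverage(expected: str, actual: str) -> float:
    """Custom code evaluator: fraction of expected keywords that appear in the output."""
    keywords = expected.lower().split()
    if not keywords:
        return 1.0
    hits = sum(1 for word in keywords if word in actual.lower())
    return hits / len(keywords)


def mean_score(test_set: list[tuple[str, str]],
               app: Callable[[str], str],
               evaluator: Evaluator) -> float:
    """Average score of one app variant over (input, expected output) pairs."""
    scores = [evaluator(expected, app(question)) for question, expected in test_set]
    return sum(scores) / len(scores)


def evaluate_trace(steps: list[dict[str, str]], evaluator: Evaluator) -> dict[str, float]:
    """Score every intermediate step of an agent trace, not only the final answer.

    Each step is assumed to look like {"name": ..., "expected": ..., "output": ...}.
    """
    return {step["name"]: evaluator(step["expected"], step["output"]) for step in steps}


# Usage: compare two variants on the same test set before shipping a change.
test_set = [("Capital of France?", "Paris"), ("Capital of Italy?", "Rome")]


def baseline(question: str) -> str:
    return "Paris"


def candidate(question: str) -> str:
    return "Paris" if "France" in question else "Rome"


print(mean_score(test_set, baseline, exact_match))   # 0.5
print(mean_score(test_set, candidate, exact_match))  # 1.0
```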

Production Observability & Debugging

Gain clear visibility into your live AI applications. Agenta traces every request, recording each step of your LLM application's execution. When errors occur, you can pinpoint the exact failure point: was it the prompt, the model, or a specific function? You can also annotate traces with your team or gather direct feedback from users, and with a single click, turn any problematic trace into a permanent test case for future experiments.
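Pinpointing the failure point in a trace amounts to walking a tree of spans. The following is a minimal sketch assuming a simple span structure; `Span`, its fields, and `root_cause` are hypothetical and not Agenta's trace format.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One step of a traced request (hypothetical schema, not Agenta's data model)."""
    name: str
    kind: str                      # e.g. "prompt", "llm", "tool"
    error: Optional[str] = None    # error message recorded for this step, if any
    children: list["Span"] = field(default_factory=list)


def root_cause(span: Span, path: tuple[str, ...] = ()) -> Optional[tuple[tuple[str, ...], Span]]:
    """Return (path of span names, failing span) for the innermost failing step, or None.

    Children are searched before the span itself, so when an error propagates up
    to a parent, the step where it originated is reported rather than the parent.
    """
    path = path + (span.name,)
    for child in span.children:
        found = root_cause(child, path)
        if found is not None:
            return found
    if span.error is not None:
        return path, span
    return None


# Usage: the request failed because the model call timed out.
trace = Span("request", "chain", error="upstream step failed", children=[
    Span("build_prompt", "prompt"),
    Span("call_model", "llm", error="timeout"),
    Span("parse_output", "tool"),
])
path, failing = root_cause(trace)
print(" > ".join(path), "|", failing.kind, "|", failing.error)
# request > call_model | llm | timeout
```

The `kind` field is what answers "was it the prompt, the model, or a specific function?" once the failing span is found.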

Collaborative Workflow for Cross-Functional Teams

Break down the walls between technical and non-technical stakeholders. Agenta provides a safe, intuitive UI for domain experts and product managers to edit prompts, run evaluations, and compare experiments without writing code. This lets the people with the deepest subject matter expertise shape the AI's behavior directly, while developers keep full programmatic control because the API and the UI offer the same capabilities.

CloudBurn

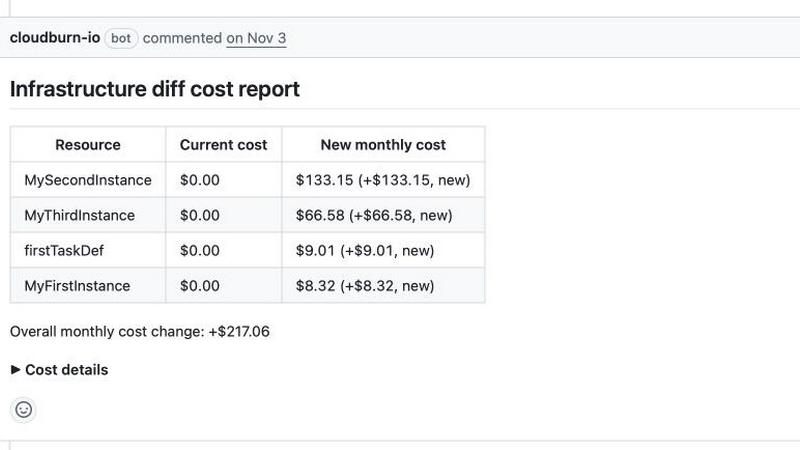

Automated Pull Request Cost Analysis

Imagine every infrastructure change being automatically audited for its financial impact. CloudBurn integrates directly with your GitHub workflow to analyze the diff in every pull request. Using live AWS pricing data, it calculates the estimated monthly cost delta of your proposed changes and posts a clear, itemized report as a comment. This happens within seconds, making cost awareness a natural, non-disruptive part of your team's review process with no manual intervention.
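The core arithmetic behind a monthly cost delta can be sketched as follows, assuming each resource has a known hourly on-demand rate and a month of 730 hours (a common convention). The function names, resource addresses, and rates are hypothetical; this is not CloudBurn's implementation.

```python
HOURS_PER_MONTH = 730  # common convention: 8,760 hours per year / 12 months


def monthly_delta(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Monthly USD cost change per resource, from hourly on-demand rates.

    `before` and `after` map a resource address to its hourly rate. A resource
    missing from `before` is being created; one missing from `after` is being destroyed.
    """
    deltas = {}
    for address in sorted(before.keys() | after.keys()):
        change = (after.get(address, 0.0) - before.get(address, 0.0)) * HOURS_PER_MONTH
        if change != 0:
            deltas[address] = change
    return deltas


def render_comment(deltas: dict[str, float]) -> str:
    """Format the deltas as a Markdown table suitable for a pull request comment."""
    lines = ["| Resource | Monthly change (USD) |", "| --- | ---: |"]
    for address, change in deltas.items():
        lines.append(f"| {address} | {change:+.2f} |")
    lines.append(f"| **Total** | {sum(deltas.values()):+.2f} |")
    return "\n".join(lines)


# Usage with made-up hourly rates: one instance is resized, another is added.
before = {"aws_instance.web": 0.10}
after = {"aws_instance.web": 0.20, "aws_instance.worker": 0.05}
print(render_comment(monthly_delta(before, after)))
```

Usage-based charges such as data transfer do not fit this hourly model and would need their own estimate.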

Real-Time AWS Pricing Intelligence

How can you trust a cost estimate if it's based on outdated data? CloudBurn eliminates this uncertainty by pulling the latest pricing information directly from AWS. Every cost projection for resources like EC2 instances, Fargate tasks, or RDS databases therefore uses current on-demand rates rather than stale figures, giving you confidence in the numbers you see.
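AWS publishes these rates through the Price List Query API, which boto3 exposes as the pricing client's `get_products` call. That call needs AWS credentials, so the sketch below only parses one returned product document. The document is trimmed to the fields used, its price is illustrative, and the helper name is hypothetical. The `terms` → `OnDemand` → `priceDimensions` → `pricePerUnit` layout is assumed to match what the API returns.

```python
import json
from decimal import Decimal


def on_demand_hourly_usd(price_item: str) -> Decimal:
    """Extract the on-demand hourly USD rate from one Price List product document.

    `price_item` is one JSON string from the `PriceList` field returned by the
    AWS Price List Query API (boto3 pricing client, `get_products`).
    """
    doc = json.loads(price_item)
    for term in doc["terms"]["OnDemand"].values():
        for dimension in term["priceDimensions"].values():
            if dimension["unit"] == "Hrs":
                # Prices arrive as strings; Decimal avoids binary rounding of money values.
                return Decimal(dimension["pricePerUnit"]["USD"])
    raise ValueError("no hourly on-demand price dimension in this product")


# Usage with a trimmed, illustrative document (real items carry many more fields).
sample = json.dumps({
    "product": {"attributes": {"instanceType": "t3.micro", "regionCode": "us-east-1"}},
    "terms": {"OnDemand": {"TERM1": {"priceDimensions": {
        "TERM1.DIM1": {"unit": "Hrs", "pricePerUnit": {"USD": "0.0104000000"}},
    }}}},
})
print(on_demand_hourly_usd(sample))
```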

Seamless Integration with Terraform & AWS CDK

Wondering how to fit a new tool into your existing IaC workflow? CloudBurn is designed to work natively with the tools you already use. By simply adding a GitHub Action for either Terraform Plan or AWS CDK Diff, you connect your pipeline. The tool automatically detects the output, sends it for analysis, and delivers the cost report, requiring no changes to your core development practices or codebase.

Detailed, Actionable Cost Breakdowns

A single total cost figure is helpful, but what if you need to understand the why behind it? CloudBurn provides granular, line-item breakdowns for every resource change. You can see the hourly rate, usage type, and a plain-language description for each component, enabling developers to make informed optimization decisions, like downsizing an over-provisioned instance, right during the code review.
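A line-item breakdown like the one described above can be modeled as one record per priced component, sorted so the largest cost drivers appear first. In this sketch, `LineItem` and `breakdown` are hypothetical. The usage-type strings follow EC2's `BoxUsage:<instance type>` style, and the rates are made up.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common convention: 8,760 hours per year / 12 months


@dataclass
class LineItem:
    """One priced component of a resource change (hypothetical structure)."""
    resource: str
    usage_type: str
    hourly_rate: float  # on-demand USD per hour
    description: str

    @property
    def monthly_cost(self) -> float:
        return self.hourly_rate * HOURS_PER_MONTH


def breakdown(items: list[LineItem]) -> list[str]:
    """Render one row per line item, largest monthly cost first."""
    rows = []
    for item in sorted(items, key=lambda i: i.monthly_cost, reverse=True):
        rows.append(
            f"{item.resource:<20} {item.usage_type:<20} "
            f"${item.hourly_rate:.4f}/h  ${item.monthly_cost:,.2f}/mo  {item.description}"
        )
    return rows


# Usage with made-up rates: the oversized instance stands out at the top.
items = [
    LineItem("aws_instance.cache", "BoxUsage:t3.micro", 0.01, "Linux instance hours"),
    LineItem("aws_instance.api", "BoxUsage:m5.xlarge", 0.20, "Linux instance hours"),
]
for row in breakdown(items):
    print(row)
```

Showing the hourly rate next to the monthly figure makes a downsizing decision easy to check: halving the rate halves the monthly line.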

Use Cases

Agenta

Streamlining Enterprise Chatbot Development

Imagine a financial services company building a customer support chatbot. With Agenta, product managers can draft and tweak prompt variations in the UI to ensure compliant and helpful tones, while developers integrate different models from OpenAI or Anthropic. The team can systematically evaluate each version against a test suite of tricky customer queries, monitor its performance in a staging environment, and quickly debug any hallucinated or incorrect advice before a full rollout.

Building and Tuning Complex AI Agents

For teams developing sophisticated multi-step agents that handle tasks like research or data analysis, Agenta is especially valuable. Developers can trace the agent's entire chain of thought to identify which tool call or reasoning step failed. They can create evaluations that assess the quality of each intermediate result, not just the final answer, enabling precise tuning of the agent's logic and prompts for maximum reliability.

Managing Rapid Prompt Iteration for Content Generation

A marketing team using LLMs to generate ad copy or blog posts can use Agenta as their central experimentation hub. Writers and marketers can collaborate with engineers to A/B test different creative prompts and models, evaluating outputs for brand voice, SEO effectiveness, and engagement. All successful prompts are versioned and stored, creating a reusable library of high-performing templates that accelerate future content creation.

Academic Research and LLM Benchmarking

Researchers and data scientists can leverage Agenta to conduct rigorous, reproducible experiments. The platform allows them to manage many prompt and parameter combinations, run large-scale automated evaluations against standardized benchmarks, and meticulously track results. This structured approach turns ad-hoc research into a formalized process, making it easier to validate hypotheses and publish findings.

CloudBurn

Preventing Costly Misconfigurations in PR Reviews

The most effective way to manage cloud spend is to stop it at the source. Engineering teams use CloudBurn to catch expensive mistakes—like accidentally provisioning a dozen xlarge instances instead of micros—before the code merges. This shifts cost governance left, empowering developers with the data to self-correct and preventing heart-stopping surprises on the monthly invoice.

Enabling Data-Driven Architecture Discussions

How often do design debates hinge on performance but ignore cost? With CloudBurn, teams can elevate their architectural discussions. When proposing a new microservices design or database solution, the immediate cost impact is visible to everyone in the PR. This allows for balanced conversations that consider both technical merit and financial sustainability from the earliest stages.

Streamlining FinOps and Budget Forecasting

For platform and FinOps teams, manually forecasting the cost of upcoming projects is a tedious chore. CloudBurn automates this estimation process. By analyzing the infrastructure code slated for development, teams can generate accurate, code-based forecasts, improving budget accuracy and providing clear financial accountability for each project or feature team.

Educating Developers on Cloud Cost Implications

Many developers write infrastructure code without a clear understanding of the financial weight of their decisions. CloudBurn acts as a continuous learning tool. With every PR comment, developers receive immediate feedback on the cost consequences of their code, fostering a culture of cost consciousness and building institutional knowledge over time.

Overview

About Agenta

What if the journey of building with large language models felt less like a perilous expedition and more like a guided discovery? Agenta is an open-source LLMOps platform crafted to illuminate the path for AI teams navigating the complex terrain of modern LLM development. It transforms the often chaotic and intuitive art of prompt engineering into a structured, collaborative, and evidence-based science. At its heart, Agenta addresses a fundamental paradox: while LLMs are inherently stochastic and unpredictable, the processes teams use to manage, evaluate, and deploy them should be anything but.

It serves as the central nervous system for cross-functional teams (engineers, product managers, and domain experts) who are determined to move beyond scattered prompts in Slack, siloed workflows, and risky "vibe testing." By integrating prompt management, automated evaluation, and production observability into a single, cohesive environment, Agenta becomes the single source of truth for the entire LLM application lifecycle. Its core mission is to empower teams to experiment swiftly, evaluate rigorously, and debug confidently, ultimately turning guesswork into reliable development and shipping robust, high-performing AI applications faster.

About CloudBurn

What if you could peer into the financial future of your infrastructure code before it ever runs? CloudBurn is a transformative tool designed for engineering teams who use Terraform or AWS CDK to manage their cloud infrastructure. It addresses a critical, often painful gap in the development lifecycle: the disconnect between writing infrastructure-as-code and understanding its cost implications. Traditionally, teams discover budget overruns weeks later on their AWS bill, long after resources are provisioned and costs are accumulating.

CloudBurn fundamentally changes this dynamic by injecting real-time cost intelligence directly into the code review process. Whenever a developer opens a pull request with infrastructure changes, CloudBurn automatically analyzes the diff using live AWS pricing data and posts a detailed cost report as a comment. This creates a powerful feedback loop, empowering teams to discuss, optimize, and adjust expensive configurations while the changes are still in development and easy to modify.

It’s a proactive shield against budgetary surprises, transforming cost management from a reactive, finance-led scramble into an integrated, engineering-led practice. It’s for any team that has ever wondered, "How much will this new architecture actually cost?" and wants an immediate, accurate answer.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is fully open-source. You can dive into the codebase on GitHub, contribute to its development, and self-host the entire platform on your own infrastructure. This ensures there is no vendor lock-in and provides full transparency into how the platform operates, aligning with the needs of many development and research teams.

How does Agenta handle different LLM providers and frameworks?

Agenta is designed to be model-agnostic and framework-flexible. It seamlessly integrates with major providers like OpenAI, Anthropic, and Cohere, as well as popular development frameworks such as LangChain and LlamaIndex. This means you can use the best model for your specific task and switch providers as needed, all within Agenta's consistent management and evaluation workflow.
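Model-agnostic tooling usually relies on a thin, provider-neutral interface with one adapter per vendor. The sketch below shows that pattern under stated assumptions. `ChatModel`, `FakeModel`, `run_variant`, and the provider names are hypothetical, and a real adapter would wrap the vendor's own SDK instead of echoing the prompt.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Provider-neutral interface (hypothetical; not Agenta's SDK)."""

    def complete(self, prompt: str, temperature: float) -> str: ...


class FakeModel:
    """Stand-in adapter for testing; a real adapter would wrap a vendor SDK call."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str, temperature: float) -> str:
        return f"[{self.name} t={temperature}] {prompt}"


def run_variant(model: ChatModel, template: str, temperature: float, **inputs: str) -> str:
    """Render a prompt template and send it to whichever provider adapter is configured."""
    return model.complete(template.format(**inputs), temperature)


# Usage: switching providers changes only the adapter, not the prompt or the evaluation code.
for model in (FakeModel("provider-a"), FakeModel("provider-b")):
    print(run_variant(model, "Translate to English: {text}", 0.0, text="hola"))
```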

Can non-technical team members really use Agenta effectively?

Absolutely. A core design principle of Agenta is to democratize the LLM development process. The platform offers an intuitive web UI that allows product managers, domain experts, and other non-coders to safely edit prompts, launch evaluation tests, and visually compare experiment results. This bridges the gap between technical implementation and subject matter expertise.

How does Agenta help with debugging production issues?

When an error occurs in a live application, Agenta's observability traces capture the complete request lifecycle. You can examine the exact prompt sent, the model's raw response, and the output of any intermediate steps. This detailed traceability transforms debugging from a guessing game into a precise investigation, allowing you to quickly identify whether the root cause was a prompt ambiguity, a model limitation, or an integration error.

CloudBurn FAQ

How does CloudBurn calculate the cost estimates?

CloudBurn calculates estimates by parsing the infrastructure diff from your Terraform plan or AWS CDK diff output. It identifies the specific AWS resources being added, modified, or removed, then cross-references them with real-time pricing data from AWS's own pricing API, applying the appropriate rates based on region, instance type, and other configuration to produce a projected monthly cost.
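Running `terraform show -json` on a saved plan produces a `resource_changes` array whose entries carry an `address` and a `change.actions` list. The sketch below assumes the analysis starts from that JSON form. It is not CloudBurn's parser, the helper name is hypothetical, and AWS CDK diff output would need separate handling. The classified addresses would then be matched to prices, for example by reading `instance_type` from `change.after` for `aws_instance` resources.

```python
import json


def classify_changes(plan_json: str) -> dict[str, list[str]]:
    """Group resource addresses from `terraform show -json <planfile>` output by change type.

    Replacements (actions ["delete", "create"] or ["create", "delete"]) get their own
    group because both the removed and the new resource affect the cost delta.
    """
    plan = json.loads(plan_json)
    groups: dict[str, list[str]] = {"create": [], "update": [], "delete": [], "replace": []}
    for rc in plan.get("resource_changes", []):
        actions = rc["change"]["actions"]
        if actions in (["delete", "create"], ["create", "delete"]):
            groups["replace"].append(rc["address"])
        elif actions == ["create"]:
            groups["create"].append(rc["address"])
        elif actions == ["update"]:
            groups["update"].append(rc["address"])
        elif actions == ["delete"]:
            groups["delete"].append(rc["address"])
        # "no-op" and "read" actions do not change what is provisioned, so they are skipped.
    return groups


# Usage with a hand-written, trimmed plan document.
plan = json.dumps({"resource_changes": [
    {"address": "aws_instance.web", "change": {"actions": ["create"]}},
    {"address": "aws_db_instance.main", "change": {"actions": ["delete", "create"]}},
    {"address": "aws_s3_bucket.logs", "change": {"actions": ["no-op"]}},
    {"address": "aws_instance.legacy", "change": {"actions": ["delete"]}},
]})
print(classify_changes(plan))
```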

Are my code and cloud credentials exposed to CloudBurn?

No, your sensitive code and AWS credentials remain secure within your GitHub environment. CloudBurn operates by receiving only the textual output of your terraform plan or cdk diff command via a GitHub Action. Your actual Terraform state files, AWS access keys, or repository code are never transmitted to CloudBurn's servers, ensuring a secure and compliant workflow.

What infrastructure-as-code tools does CloudBurn support?

CloudBurn currently supports two of the most popular IaC frameworks: HashiCorp Terraform and the AWS Cloud Development Kit (AWS CDK). Support is implemented through GitHub Actions that capture the plan or diff output of each tool, making integration straightforward for teams using either one.

Can CloudBurn analyze costs for existing infrastructure?

The primary focus of CloudBurn is on analyzing changes—the diff in a pull request. It is designed for pre-deployment cost visibility. For comprehensive cost management and analysis of your already-deployed, full infrastructure stack, you would typically use a cloud provider's native cost tool (like AWS Cost Explorer) or a dedicated cloud cost management platform.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to bring order and collaboration to the often chaotic process of building applications with large language models. It acts as a central hub for teams to experiment, evaluate, and manage their LLM prompts and workflows in a structured, evidence-based way.

Users often explore alternatives for various reasons. Some may need a solution with different pricing models, whether a fully managed service or a different open-source license. Others might seek specific integrations, deployment options, or feature sets that align more closely with their team's unique workflow or technical stack.

When evaluating options, it's wise to consider your team's core needs. Look for tools that foster collaboration across roles, provide robust testing and evaluation capabilities, and offer the flexibility to work with multiple AI models. The goal is to find a platform that turns the unpredictable nature of LLM development into a reliable, repeatable engineering practice.

CloudBurn Alternatives

CloudBurn is a specialized tool in the development and DevOps category, designed to bring real-time cloud cost intelligence directly into the infrastructure-as-code workflow. It analyzes Terraform or AWS CDK changes in pull requests to forecast AWS costs before code ever reaches production, transforming cost management from a reactive finance task into a proactive engineering practice.

Users often explore alternatives for various reasons. Some may seek different pricing models or have budget constraints that require a more basic solution. Others might need support for additional cloud providers beyond AWS, or require deeper integration with CI/CD platforms other than GitHub. The specific feature set, such as the depth of cost analysis or reporting capabilities, can also drive the search for a different tool.

When evaluating options, it's wise to consider a few key areas. Look for accurate, up-to-date pricing data that reflects your actual usage and regions. Seamless integration into your existing developer workflow is crucial for adoption, ensuring the tool provides value without becoming a burden. Finally, consider the clarity and actionability of the insights provided; the best tools empower teams to make informed decisions quickly, right where they code.