JSON to Video vs Seedance 2 AI Video Generator

Side-by-side comparison to help you choose the right AI tool.

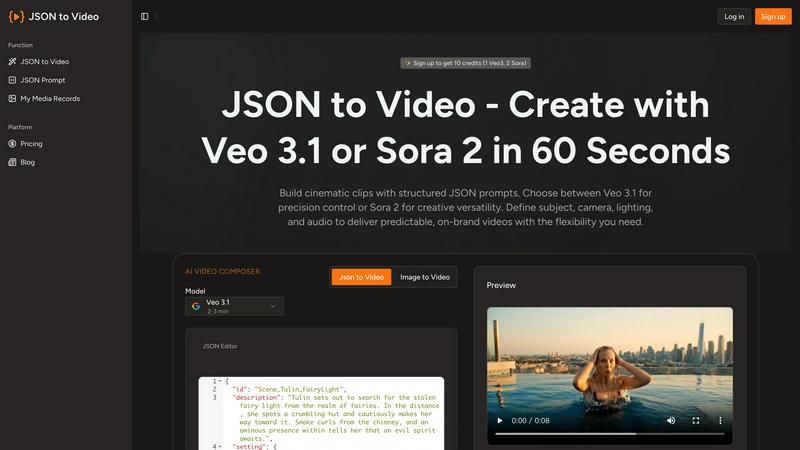

JSON to Video

Discover how structured JSON prompts unlock predictable, cinematic videos in just sixty seconds.

Last updated: March 1, 2026

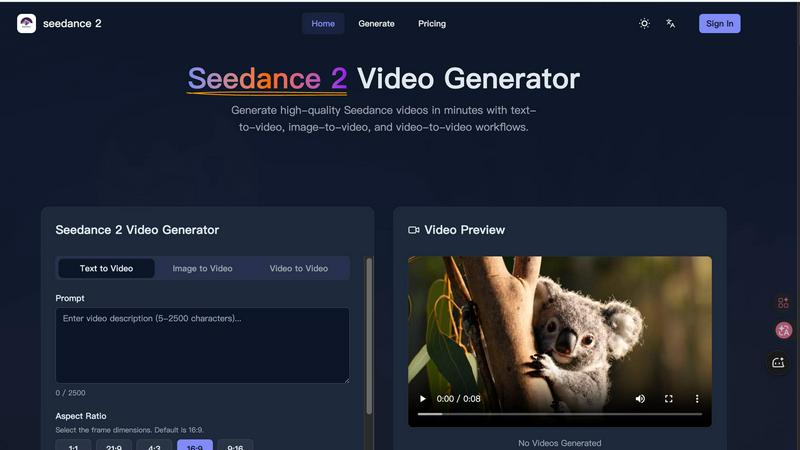

Seedance 2 AI Video Generator

Discover how Seedance 2 AI transforms your text and images into cinematic videos with ease.

Last updated: March 1, 2026

Feature Comparison

JSON to Video

Structured JSON Schema for Precision

The cornerstone of JSON to Video is its JSON schema, which acts as a blueprint for your video. Each field corresponds to a specific visual or auditory parameter, so you can define shot composition, camera movement, subject description, wardrobe, scene location, and audio mix individually. This structure reduces the ambiguity of free-text prompts, helping the AI model interpret your creative direction accurately and consistently and making outputs more predictable.
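As a rough illustration of what such a blueprint could look like, here is a minimal Python sketch that builds a structured prompt and serializes it to JSON. The field names (`shot`, `subject`, `scene`, `audio`) and the sample values are assumptions chosen to mirror the parameters listed above; they are not the platform's documented schema.

```python
import json

# Hypothetical field names and values; the real schema may differ.
prompt = {
    "shot": {
        "composition": "medium shot, subject centered",
        "camera_movement": "smooth slider pan",
    },
    "subject": {
        "description": "adult in simple, clean clothing",
        "wardrobe": "neutral-toned casual wear",
    },
    "scene": {"location": "modern living room with ambient shelves"},
    "audio": {"mix": "music low, ambient sound present"},
}

# Serialize to the JSON text that would be submitted as the prompt.
payload = json.dumps(prompt, indent=2)
print(payload)
```

Because every parameter has its own key, changing one aspect of the shot (for example the camera movement) means editing one value rather than rewording a whole sentence.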

Multi-Model Support (Veo 3.1, Seedance 2, etc.)

The platform offers the flexibility to leverage the unique strengths of multiple state-of-the-art generative video models. You are not locked into a single AI's interpretation. Whether you need the cinematic quality of Veo 3.1, the dynamic motion of Seedance 2, or the capabilities of Wan 2.6 and Kling 2.6, you can choose the best tool for your specific project. This allows for exploratory creativity, letting you see how different models render the same structured prompt.
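To make the "same prompt, different models" idea concrete, the following sketch pairs one structured prompt with several model labels. The identifier strings and the `build_requests` helper are hypothetical; the platform's actual model names and request format are not given here.

```python
import json

# Illustrative labels only, not confirmed API values.
MODELS = ["veo-3.1", "seedance-2", "wan-2.6", "kling-2.6"]

def build_requests(prompt: dict, models: list[str]) -> list[dict]:
    """Pair one structured prompt with each model so outputs can be compared."""
    return [{"model": name, "prompt": prompt} for name in models]

prompt = {"subject": {"description": "adult in simple, clean clothing"}}
requests_to_send = build_requests(prompt, MODELS)

for request in requests_to_send:
    print(json.dumps(request))
```

Keeping the prompt fixed and varying only the model label is what makes a side-by-side comparison of renders meaningful.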

Detailed Cinematic Control

Go beyond basic subject matter. The schema provides granular control over true cinematic elements. You can specify the lens type (e.g., "35mm with cinematic softness"), frame rate, camera movements like "smooth slider pans," intricate lighting setups, and a detailed color palette. This level of control empowers users to craft videos with a specific tone and professional aesthetic, making it feel less like AI generation and more like digital filmmaking.
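The cinematic fields described above could be modeled as a small typed record. This is a sketch under assumptions: the class name, attribute names, and default lighting and palette values are invented for illustration, while the lens and camera movement strings come from the examples in the text.

```python
from dataclasses import dataclass, asdict, field

@dataclass
class Cinematography:
    """Hypothetical grouping of the cinematic controls named in the text."""
    lens: str = "35mm with cinematic softness"
    frame_rate: int = 24
    camera_movement: str = "smooth slider pan"
    lighting: str = "soft key light with warm practicals"
    color_palette: list[str] = field(
        default_factory=lambda: ["warm amber", "soft teal"]
    )

    def __post_init__(self) -> None:
        # Reject values that cannot describe a real clip.
        if self.frame_rate <= 0:
            raise ValueError("frame_rate must be positive")

# Override one setting and keep the remaining defaults.
cine = asdict(Cinematography(frame_rate=30))
print(cine)
```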

Integrated Audio and Timeline Specification

JSON to Video understands that sound is half the experience. The schema includes dedicated audio sections for music, ambient sound, and sound effects, complete with mix levels. Furthermore, the timeline array allows you to break down the video sequence by seconds, describing the action and visual progression moment-by-moment. This feature is perfect for storyboarding complex narratives and ensuring the audio-visual elements are perfectly synchronized from start to finish.
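A timeline broken down by seconds is easy to get wrong by leaving gaps or overlaps, so a quick check before submitting can help. The sketch below assumes a hypothetical layout in which each timeline entry has `start`, `end`, and `action` keys and each audio layer has a `level` between 0 and 1; none of these names are confirmed by the platform.

```python
def validate_timeline(timeline: list[dict], duration: float) -> None:
    """Check that segments are ordered, gap-free, and span the full clip."""
    cursor = 0
    for segment in timeline:
        if segment["start"] != cursor or segment["end"] <= segment["start"]:
            raise ValueError(f"bad segment: {segment}")
        cursor = segment["end"]
    if cursor != duration:
        raise ValueError("timeline does not cover the full duration")

# Hypothetical clip description with audio layers and a per-second timeline.
clip = {
    "duration": 8,
    "audio": {
        "music": {"style": "soft, ascending ambient pad", "level": 0.6},
        "ambient": {"style": "quiet room tone", "level": 0.3},
        "sfx": {"style": "gentle door click", "level": 0.4},
    },
    "timeline": [
        {"start": 0, "end": 3, "action": "camera pans across the shelves"},
        {"start": 3, "end": 6, "action": "subject enters and sits down"},
        {"start": 6, "end": 8, "action": "slow push-in on the subject"},
    ],
}

validate_timeline(clip["timeline"], clip["duration"])  # raises if inconsistent
```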

Seedance 2 AI Video Generator

Multimodal Generation Engine

Seedance 2.0 doesn't limit your starting point. Explore video creation through three distinct pathways: Text-to-Video for pure concept ideation, Image-to-Video for maintaining strict style and character consistency from a reference, and Video-to-Video for intelligently restyling or extending existing footage. This flexibility lets you choose the most efficient mode for your creative task, whether you're building from scratch or evolving existing assets.

Reference-First Creative Control

One of the most intriguing aspects of Seedance 2.0 is its emphasis on visual anchors. Instead of relying solely on textual prompts, you can upload a reference image or video clip to guide the AI. This dramatically reduces ambiguity, ensuring the generated video faithfully adopts the desired character design, artistic style, color palette, and even camera angle feel, leading to more professional and on-brand results with less iterative guesswork.

Integrated Audio-Video Synthesis

Why create the video and sound separately? Seedance 2.0 can generate synchronized audio in a single pass, a feature that sets it apart. This includes background sound effects (SFX), musical scores, and even voice dialogue with lip-sync support for over 10 languages. This holistic approach streamlines production, allowing you to explore complete audiovisual narratives from a single prompt or reference.

Production-Ready Output Specifications

Curious about the technical quality? Seedance 2.0 is engineered for professional use. It delivers videos up to 1080p resolution at a cinema-standard 24 frames per second for smooth motion. You can generate clips from 5 to 10 seconds (with extension capabilities) in versatile aspect ratios like 16:9, 9:16, and 1:1, ensuring your content is optimized for any platform, from YouTube to TikTok, all exported in the universally compatible MP4 format.
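The limits quoted above can be turned into a small planning helper. This is a sketch, not an official client: `plan_clip` is a hypothetical function, and the pixel dimensions for 9:16 and 1:1 assume the short side is 1080 px, which the text does not state.

```python
# Limits taken from the specifications quoted above.
FPS = 24
MIN_SECONDS, MAX_SECONDS = 5, 10

# Assumed pixel sizes: short side fixed at 1080 px.
SIZES = {"16:9": (1920, 1080), "9:16": (1080, 1920), "1:1": (1080, 1080)}

def plan_clip(aspect_ratio: str, seconds: int) -> dict:
    """Validate a requested clip and report its size and frame count."""
    if aspect_ratio not in SIZES:
        raise ValueError(f"unsupported aspect ratio: {aspect_ratio}")
    if not MIN_SECONDS <= seconds <= MAX_SECONDS:
        raise ValueError(f"duration must be {MIN_SECONDS}-{MAX_SECONDS} seconds")
    width, height = SIZES[aspect_ratio]
    return {
        "width": width,
        "height": height,
        "frames": FPS * seconds,
        "container": "mp4",
    }

print(plan_clip("9:16", 10))
```

At 24 frames per second, a 10-second clip contains 240 frames and a 5-second clip contains 120.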

Use Cases

JSON to Video

Branded Marketing and Advertisement Clips

Marketers can produce on-brand video content with consistent results. By codifying brand guidelines (such as color palettes, lighting moods, and character styles) into a JSON template, teams can generate many ad variations, product showcases, or social media clips that stay aligned with the brand. This allows for rapid A/B testing of different scenarios while keeping an entire campaign visually coherent.
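One way to picture a brand template is as a base dictionary whose brand fields stay fixed while a single field changes per variant. The sketch below is illustrative only: the keys, the sample values, and the `make_variant` helper are assumptions, not part of the platform.

```python
import copy

# Hypothetical brand defaults shared by every variant.
BRAND_TEMPLATE = {
    "color_palette": ["brand navy", "warm white"],
    "lighting": "bright, soft daylight",
    "subject": {"style": "friendly presenter in brand colors"},
    "scene": {"location": "studio backdrop"},
}

def make_variant(template: dict, overrides: dict) -> dict:
    """Return a copy of the template with top-level fields replaced."""
    variant = copy.deepcopy(template)
    variant.update(copy.deepcopy(overrides))
    return variant

# Two A/B variants that differ only in scene location.
variants = [
    make_variant(BRAND_TEMPLATE, {"scene": {"location": "city rooftop at dusk"}}),
    make_variant(BRAND_TEMPLATE, {"scene": {"location": "sunlit kitchen"}}),
]

for variant in variants:
    print(variant["scene"]["location"], "|", variant["color_palette"])
```

Copying the template before overriding keeps the brand defaults untouched, so every new variant starts from the same baseline.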

Narrative Short Film and Storyboarding

Independent filmmakers and writers can use the platform as a dynamic storyboarding tool. The structured prompt allows for pre-visualizing complex scenes, experimenting with different cinematography choices, and understanding the flow of a narrative before live production. It’s a sandbox for visualizing script elements, from character actions and props to camera angles and environmental transitions, bringing written stories to life quickly.

Educational and Explainer Content

Educators and instructional designers can create engaging explainer videos with clear, consistent visual metaphors. By structuring the lesson into a timeline with defined scenes and subjects, complex topics can be broken down into digestible, visually compelling segments. The predictable output ensures that key educational elements are always presented clearly, enhancing learning retention.

Product Visualization and Prototyping

Designers and architects can visualize products or spaces in cinematic environments. Imagine showcasing a new piece of furniture or a room layout through a curated video sequence, with controlled lighting to highlight features and specific camera movements to guide the viewer's eye. This use case transforms static designs into immersive experiences, ideal for client presentations and concept validation.

Seedance 2 AI Video Generator

Rapid Social Media Content Creation

For social media managers and content creators, Seedance 2.0 is a powerhouse for exploration. Quickly generate a variety of engaging, platform-specific video clips for Instagram Reels, TikTok, or YouTube Shorts. Experiment with different visual styles and narratives based on trending topics or brand campaigns, producing a high volume of polished content without the need for filming or intensive editing.

Dynamic Advertising and Marketing Campaigns

Marketing teams can rapidly prototype ad concepts. Use the image-to-video mode to animate key brand visuals or product shots, creating video ads that stay stylistically consistent with the source imagery. The ability to quickly iterate on different versions allows for efficient A/B testing of visual themes and messages before committing to a high-cost production.

Concept Visualization and Storyboarding

Filmmakers, writers, and designers can use Seedance 2.0 as a brainstorming companion. Transform descriptive text from a script or a mood board image into a moving scene. This provides a tangible, explorative glimpse into how a concept might look in motion, facilitating better communication and creative decision-making in the early stages of a project.

Educational and Explainer Video Production

Educators and instructional designers can explore new ways to explain complex subjects. Generate short, visually engaging clips to illustrate abstract concepts, historical events, or scientific processes. The video-to-video mode can also be used to repurpose or modernize existing lecture footage or presentation slides into more dynamic and engaging educational content.

Overview

About JSON to Video

What if you could direct an AI video model with the precision of a film director, not the guesswork of a prompt engineer? JSON to Video is a groundbreaking platform that unlocks this exact possibility. It transforms the often-frustrating process of text-to-video generation into a structured, predictable, and deeply creative workflow.

By using a detailed JSON schema instead of ambiguous text prompts, you gain meticulous control over every cinematic element. Imagine filling out a digital storyboard where you can specify the exact subject, camera movements, lens type, lighting conditions, color grading, soundtrack, and even subtle sound effects. This platform is specifically engineered to interpret your structured data payload and translate it faithfully into high-quality video clips using cutting-edge models like Veo 3.1, Seedance 2, Wan 2.6, and Kling 2.6.

It's designed for creators, marketers, educators, and brands who are curious about AI video but demand reliability and alignment with their vision. The core value proposition is profound: replace randomness with repeatability, and transform structured data into stunning, cinematic scenes in as little as 60 seconds. It invites you to explore a new language of visual creation, one where your intent is clearly understood and executed.

About Seedance 2 AI Video Generator

What if you could conjure cinematic scenes from a simple thought, a single image, or an existing clip? Seedance 2.0 is the key to that creative portal. It's a powerful, all-in-one AI video generator designed to transform text prompts, reference images, or source footage into polished, professional-grade video clips with remarkable speed and control. This tool moves beyond basic generation, focusing on delivering consistency, smoother motion, and a workflow that feels intuitive rather than intimidating. It's built specifically for creators, marketers, and agencies who are curious about the potential of AI video but need reliable, high-quality output without getting bogged down in complex editing software.

The core value proposition of Seedance 2.0 lies in its reference-first philosophy and multimodal flexibility. By allowing you to anchor your vision with a visual or video reference, it reduces the guesswork of prompt engineering, leading to more predictable and stylistically coherent results. Whether you're exploring a new concept from text, extending a brand's visual identity from an image, or restyling existing footage, Seedance 2.0 provides a fast track from inspiration to a finished, cinematic video ready for social media, advertising, or product demos.

Frequently Asked Questions

JSON to Video FAQ

What is the main advantage of using JSON over a text prompt?

The main advantage is precision and predictability. A text prompt like "a person in a room" is open to vast interpretation by an AI. A JSON schema allows you to specify that the person is an "adult in simple, clean clothing," the room is a "modern living room with ambient shelves," the camera uses a "35mm lens," and the movement is a "smooth slider pan." This structured approach drastically reduces guesswork and ensures the output closely matches your detailed vision.

Do I need to be a programmer to use JSON to Video?

Not at all. While familiarity with JSON's basic structure (key-value pairs in braces) is helpful, the platform is designed to be accessible. The website provides clear templates and examples for various genres (Action, Ad, Narrative, etc.) that you can copy and modify. You simply fill in the values for each field, much like completing a detailed form, without needing to write complex code from scratch.
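For readers new to JSON, the most common stumbling block is a small syntax slip such as a trailing comma. The sketch below shows one way to check an edited template before submitting it, using Python's standard `json` module. The template keys are illustrative and `check_json` is a hypothetical helper.

```python
import json

# An edited template: key-value pairs inside braces (keys are illustrative).
template_text = """
{
  "subject": "adult in simple, clean clothing",
  "scene": "modern living room with ambient shelves",
  "lens": "35mm",
  "camera_movement": "smooth slider pan"
}
"""

def check_json(text: str) -> tuple[bool, str]:
    """Report whether the text parses as JSON and, if not, where it fails."""
    try:
        json.loads(text)
        return True, "valid JSON"
    except json.JSONDecodeError as err:
        return False, f"line {err.lineno}: {err.msg}"

print(check_json(template_text))
# A trailing comma before the closing brace is not allowed in JSON.
print(check_json('{"subject": "adult",}'))
```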

Which AI video models does JSON to Video support?

The platform supports several leading generative video models, including Google's Veo 3.1, ByteDance's Seedance 2, Wan 2.6, and Kling 2.6. This multi-model approach gives you creative flexibility, allowing you to select the model that best suits the style or motion requirements of your specific project, all within the same structured prompting interface.

Can I control the audio and sound design?

Yes, audio control is a core feature. The JSON schema includes a dedicated "audio" section where you can describe the music style (e.g., "soft, ascending ambient pad"), ambient sounds, specific sound effects, and their mix levels. This allows for the creation of a cohesive audio-visual experience, where the soundtrack and effects are integral to the scene's mood and action, not an afterthought.

Seedance 2 AI Video Generator FAQ

What input methods does Seedance 2.0 support?

Seedance 2.0 offers three primary input methods for maximum creative exploration. You can start with a detailed text prompt (up to 2500 characters), upload a reference image to guide style and composition, or provide a source video clip to restyle or inspire a new generation. This multimodal approach allows you to begin your video creation journey from whatever spark of inspiration you have.
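The three input methods and the 2500-character prompt limit can be expressed as a simple pre-flight check. This is a minimal sketch: the mode labels, the `check_input` helper, and the rule that image and video modes require a reference file are assumptions made for illustration.

```python
from typing import Optional

MAX_PROMPT_CHARS = 2500  # text prompt limit quoted above
INPUT_MODES = {"text", "image", "video"}  # hypothetical mode labels

def check_input(mode: str, prompt: str = "",
                reference_path: Optional[str] = None) -> list[str]:
    """Return a list of problems; an empty list means the input looks fine."""
    problems = []
    if mode not in INPUT_MODES:
        problems.append(f"unknown mode: {mode}")
    if len(prompt) > MAX_PROMPT_CHARS:
        problems.append(f"prompt exceeds {MAX_PROMPT_CHARS} characters")
    if mode == "text" and not prompt.strip():
        problems.append("text mode needs a prompt")
    if mode in {"image", "video"} and not reference_path:
        problems.append(f"{mode} mode needs a reference file")
    return problems

print(check_input("text", "a lighthouse at dawn, slow aerial orbit"))
print(check_input("image"))
print(check_input("text", "x" * 2501))
```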

How does Seedance 2.0 ensure character and style consistency?

The model excels at consistency through its reference-first workflow. When you provide a source image or video, Seedance 2.0 uses it as a strong anchor point. This means the AI analyzes the visual elements—like a character's appearance, clothing, art style, and color scheme—and maintains those attributes throughout the generated video sequence, leading to more coherent and professional results than text prompts alone.

Can Seedance 2.0 generate videos with sound?

Yes, a standout feature is its integrated audio generation. Seedance 2.0 can synthesize synchronized audio in a single pass, which includes adding background sound effects (SFX), a musical score, and even voiceover with accurate lip-sync for over 10 languages. This creates a more complete and immersive video experience directly from your initial input.

What are the technical specifications for the output video?

Videos generated by Seedance 2.0 are production-ready. The maximum output resolution is 1080p (Full HD), with a standard duration of 5-10 seconds per generation (extendable). Videos are rendered at a smooth 24 frames per second and can be exported in 16:9 (landscape), 9:16 (portrait), or 1:1 (square) aspect ratios. All final videos are delivered in the widely compatible MP4 (H.264) format.

Alternatives

JSON to Video Alternatives

JSON to Video is a specialized tool in the generative AI video space, allowing creators to build cinematic scenes using structured JSON data instead of ambiguous text prompts. This approach offers a unique blend of creative control and predictable output, setting it apart from more conventional video generation platforms.

Users often explore alternatives for various reasons. Some may seek different pricing models or free tiers to experiment with. Others might need compatibility with specific platforms or workflows, or desire a different balance between ease of use and granular creative control. The search for the right tool is a natural part of finding the perfect fit for one's project needs and technical comfort.

When evaluating other options, consider the core trade-off between flexibility and predictability. Look at how much direct control you have over visual elements like camera work and lighting versus the simplicity of a text-only interface. Also, assess the output quality, supported formats, and how well the tool integrates into your existing content creation pipeline. The goal is to find a solution that aligns with both your creative vision and practical requirements.

Seedance 2 AI Video Generator Alternatives

Seedance 2 AI Video Generator is a powerful tool in the AI video creation space, designed to transform text, images, and existing clips into cinematic-quality videos. It stands out for its promise of a fast, all-in-one workflow that maintains visual consistency and offers intuitive control, making it a compelling option for creators looking to streamline their production process.

Even with its strengths, users often explore other options for a variety of reasons. Some might be seeking a different pricing model or subscription tier that better fits their budget. Others may need specific features, like different export formats, more granular control over animation, or compatibility with their existing software ecosystem. The quest for the right tool is deeply personal and depends on one's unique creative workflow and technical requirements.

When evaluating alternatives, it's wise to consider what matters most for your projects. Look closely at the core AI capabilities, such as the quality of motion and how well it interprets prompts. The user interface and learning curve are also crucial, as a tool should feel empowering, not frustrating. Finally, consider practical aspects like output resolution, licensing terms for generated content, and the overall value for your investment.