Hostim.dev vs OpenMark AI

A side-by-side comparison to help you choose the right tool.

Hostim.dev

Discover simple EU Docker hosting with built-in databases for fast, secure deployments.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI lets you benchmark over 100 LLMs on your specific tasks, providing instant insights into cost, speed, quality, and stability.

Last updated: March 26, 2026

Visual Comparison

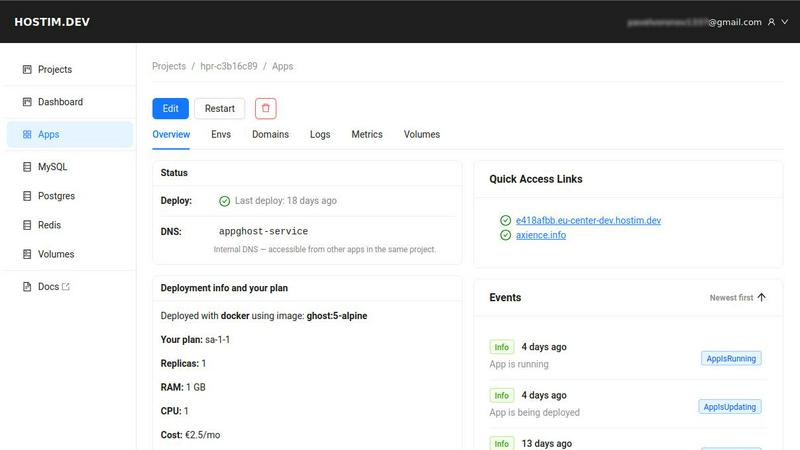

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Deployment Simplicity

Hostim.dev reduces infrastructure complexity by accepting what you already have. You can deploy directly from a public or private Docker image, connect a Git repository for automatic updates, or paste an entire Docker Compose file to launch a multi-service application stack. This means you can go from nothing to a live, reachable application in minutes, without writing Kubernetes manifests or configuring cloud networking by hand. It is a fast route to production for containerized workloads.

Built-in Managed Services

Imagine your database, cache, and file storage being provisioned and connected to your app automatically. Hostim.dev does exactly that, offering instantly available, managed instances of MySQL, PostgreSQL, and Redis. Persistent storage volumes are also just a click away. These services are pre-wired to your application with environment variables, so everything works together seamlessly from the first moment, removing a huge chunk of traditional setup work.
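As a rough illustration of what "pre-wired with environment variables" can look like from the application side, the sketch below reads connection settings from the environment and parses them. The variable names `DATABASE_URL` and `REDIS_URL`, and the example connection string, are assumptions made for illustration; the names Hostim.dev actually injects are not stated here, so check your project's settings.

```python
import os
from urllib.parse import urlparse


def read_service_config(env=None):
    """Collect connection settings from environment variables.

    DATABASE_URL and REDIS_URL are hypothetical names used for illustration.
    """
    env = os.environ if env is None else env
    config = {}
    for name in ("DATABASE_URL", "REDIS_URL"):
        raw = env.get(name)
        if raw is None:
            continue  # this service is not attached to the project
        parsed = urlparse(raw)
        config[name] = {
            "scheme": parsed.scheme,
            "host": parsed.hostname,
            "port": parsed.port,
            "database": parsed.path.lstrip("/") or None,
        }
    return config


if __name__ == "__main__":
    # Made-up connection string, passed explicitly instead of via os.environ.
    example_env = {"DATABASE_URL": "postgresql://app:secret@db.internal:5432/appdb"}
    print(read_service_config(example_env))
```

Reading configuration from the environment rather than hard-coding it means the same container image can run locally and on the platform without changes.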

Secure & Isolated EU Hosting

Every project you create on Hostim.dev runs in its own isolated Kubernetes namespace on bare-metal servers located in Germany. Data therefore stays inside the EU, where it is subject to the GDPR. Each project automatically receives a free HTTPS certificate, and you get access to live logs and basic metrics, which gives your applications a secure and observable foundation from the start.

Transparent Per-Project Billing

Hostim.dev uses a simple, flat pricing model that starts at €2.50 per month. Costs are tracked per project, which makes it easy to understand expenses, budget for client work, or manage multiple applications. This per-project isolation covers both resources and billing, so you can hand over a project cleanly or track costs for individual products or clients.

OpenMark AI

User-Friendly Task Configuration

OpenMark AI has a straightforward task configuration interface in which users describe the tasks they want to benchmark in plain language. No coding is required, which makes it accessible to non-technical team members.

Comprehensive Model Comparison

The platform supports benchmarking against over 100 AI models, providing users with the ability to compare real-time results across a diverse range of tasks. This feature ensures that teams can find the best-performing model for their specific needs.

Real-Time Performance Metrics

Users can evaluate crucial performance metrics like cost per request and latency during benchmarking sessions. This data allows teams to understand the economic implications of their choices and helps in selecting models that deliver the best value.
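To make the cost-versus-latency trade-off concrete, here is a minimal sketch of the arithmetic involved. It is not OpenMark AI's implementation: the `RunResult` type, the model names, the token counts, and the per-million-token prices are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    model: str
    input_tokens: int
    output_tokens: int
    latency_s: float
    # Hypothetical prices in USD per one million tokens.
    input_price_per_m: float
    output_price_per_m: float

    @property
    def cost_usd(self) -> float:
        """Cost of one request: tokens times price, scaled from per-million."""
        return (
            self.input_tokens * self.input_price_per_m
            + self.output_tokens * self.output_price_per_m
        ) / 1_000_000


def cheapest_under_latency(results, max_latency_s):
    """Return the lowest-cost result within the latency limit, or None."""
    eligible = [r for r in results if r.latency_s <= max_latency_s]
    return min(eligible, key=lambda r: r.cost_usd, default=None)


if __name__ == "__main__":
    results = [
        RunResult("model-a", 1200, 300, 0.9, 3.0, 15.0),
        RunResult("model-b", 1200, 300, 2.4, 0.5, 1.5),
    ]
    for limit in (1.0, 3.0):
        best = cheapest_under_latency(results, limit)
        print(limit, best.model if best else None)
```

Filtering by a latency budget first and then minimizing cost is only one possible selection rule; a team might instead weight quality scores alongside both.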

Consistency Checks

OpenMark AI enables users to test the consistency of model outputs by running the same task multiple times. This feature is vital for teams that require reliable and repeatable results, ensuring that they can trust the models they choose.
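One simple way to quantify consistency across repeated runs is sketched below. It is an assumption about how such a check could be computed, not a description of OpenMark AI's scoring: the function name, the agreement-rate measure, and the sample data are illustrative.

```python
from collections import Counter
from statistics import mean, pstdev


def consistency_report(outputs, scores):
    """Summarize repeated runs of one task on one model.

    outputs: the raw answer from each run (strings).
    scores: one quality score per run, on whatever scale the grader uses.
    """
    if not outputs or len(outputs) != len(scores):
        raise ValueError("need at least one run and one score per output")
    most_common_answer, count = Counter(outputs).most_common(1)[0]
    return {
        "runs": len(outputs),
        # Share of runs that produced the most frequent answer.
        "agreement_rate": count / len(outputs),
        "most_common_answer": most_common_answer,
        "mean_score": mean(scores),
        # Population standard deviation: spread of scores across the runs.
        "score_stdev": pstdev(scores),
    }


if __name__ == "__main__":
    report = consistency_report(
        ["positive", "positive", "negative", "positive"],
        [1, 1, 0, 1],
    )
    print(report)
```

A high mean score with a large standard deviation suggests a model that is right on average but unreliable on any single call, which is the situation repeated runs are meant to expose.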

Use Cases

Hostim.dev

Freelancer Project Delivery

For freelancers, speed and clean handover are key. Hostim.dev allows you to rapidly deploy a client's application from a Docker Compose file, have all databases ready instantly, and present a live prototype or final product in record time. The per-project billing model lets you invoice clients transparently for the exact infrastructure used, and you can hand over the project easily without any server management baggage.

Agency Client Work Management

Agencies can leverage Hostim.dev to maintain strict isolation between different client projects, each in its own secure environment. This prevents resource conflicts and enhances security. The clear cost breakdown per project simplifies internal accounting and client billing. Furthermore, the EU-based, GDPR-compliant hosting is a significant advantage for serving European clients with data residency requirements.

Startup & SaaS MVP Launch

Startups building a SaaS product or an MVP need to move fast without upfront DevOps investment. Hostim.dev provides a full, production-ready environment with databases, SSL, and scaling options from day one. The predictable pricing protects from unexpected bills, allowing teams to focus their energy on developing features and validating their business idea rather than on infrastructure puzzles.

Educational Projects & Prototypes

Students and developers learning modern backend development can use Hostim.dev to deploy real-world projects with real databases and a public URL. The free trial and student credits offer a risk-free way to experiment with Docker, different frameworks, and managed services, resulting in a tangible portfolio piece that demonstrates practical deployment skills beyond local development.

OpenMark AI

Model Selection for AI Features

Teams can use OpenMark AI to systematically evaluate different models to find the most suitable option for their intended AI features. This helps ensure that the chosen model aligns with both performance and cost expectations.

Cost Analysis for API Usage

By comparing the actual costs associated with different models, teams can make informed financial decisions about which APIs to use. This is particularly useful for budgeting and resource allocation in projects.

Quality Assurance in AI Outputs

OpenMark AI allows teams to assess the quality of outputs across various models, helping to ensure that the final product meets user expectations and project requirements. This is crucial for maintaining high standards in AI applications.

Benchmarking for Research and Development

OpenMark AI gives R&D teams a way to explore the capabilities of emerging models. By benchmarking new models on their own tasks as they are released, teams can judge early whether a newer model is worth adopting.

Overview

About Hostim.dev

What if the path from a brilliant idea to a live, fully-featured application could be as simple as a single command? Hostim.dev invites you to explore this very possibility, reimagining the deployment experience for the modern developer. It's a bare-metal Platform-as-a-Service (PaaS) that strips away the intimidating layers of cloud console navigation and complex infrastructure YAML, offering a direct launchpad for your containerized applications. At its heart, Hostim.dev is built for those who crave both simplicity and control, elegantly removing the heavy DevOps overhead without locking you into a proprietary ecosystem.

You provide your application, whether it's a Docker image, a Git repository, or a complete Docker Compose file, and the platform takes care of the rest. It automatically provisions and seamlessly wires up essential managed services like MySQL, PostgreSQL, Redis, and persistent storage volumes. Every project is securely isolated, comes with automatic HTTPS, and runs on GDPR-compliant bare-metal servers in Germany. Designed for freelancers, startups, agencies, and SaaS builders, Hostim.dev offers a transparent, predictable journey from code to production, allowing you to focus on what you love most: building remarkable software, not managing servers.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Developers and product teams describe the tasks they want to evaluate in plain language, then run identical prompts against a wide range of models in a single session. Results can be compared directly on cost per request, latency, scored quality, and consistency across multiple runs. Because each task is run more than once, teams can see the variance in outputs and avoid basing a decision on a single lucky response.

What sets OpenMark AI apart is its user-friendly interface and ease of use. There's no need for complex API configurations or coding; everything is handled within the platform. This makes it ideal for those who need to validate their model choices before deploying AI features. By using real API calls instead of cached data, OpenMark AI provides insights into the actual performance and cost-efficiency of models, guiding users toward informed decisions tailored to specific workflows. With free and paid plans available, OpenMark AI is accessible for teams worldwide looking to optimize their AI implementations.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

The free offering is a 5-day trial of a complete project, with no credit card required to start. The trial includes all platform features: you can deploy your application, provision managed databases (which have their own free tiers for low usage), attach storage volumes, and benefit from automatic HTTPS and isolation. It is a full-featured experience designed to let you test the platform thoroughly with your real workload.

Can I deploy with just a Docker Compose file?

Absolutely. One of the core deployment methods is via a Docker Compose file. You can simply paste your existing docker-compose.yml into the Hostim.dev dashboard, and the platform will parse it and deploy all defined services. This is a powerful way to lift and shift existing multi-container local setups directly into a hosted, production-like environment without rewriting any configuration.

Where is my app hosted?

All applications on Hostim.dev are hosted on bare-metal servers in a German data center within the European Union. This keeps latency low for EU users and keeps data storage and processing inside the EU, which gives you a strong foundation for meeting GDPR and other data protection requirements.

Do I need to know Kubernetes?

Not at all. Hostim.dev uses Kubernetes under the hood to provide robust isolation and orchestration, but this complexity is entirely abstracted away from you. You interact with a simple dashboard and deploy using Docker-native inputs (images, Git repositories, Compose files). You get the benefits of Kubernetes, such as security, isolation, and reliability, without needing to learn or manage it directly.

OpenMark AI FAQ

What types of tasks can I benchmark with OpenMark AI?

OpenMark AI supports a wide variety of tasks, including but not limited to classification, translation, data extraction, research Q&A, and image analysis. This versatility allows users to test models across many applications.

Do I need to configure API keys to use OpenMark AI?

No, OpenMark AI simplifies the benchmarking process by eliminating the need for users to configure separate API keys for different models. The platform handles this automatically, allowing for a seamless experience.

How can I ensure the consistency of model outputs?

OpenMark AI allows users to run multiple iterations of the same task, enabling teams to evaluate the consistency of outputs. This feature is essential for applications where reliability and predictability are crucial.

Are there any costs associated with using OpenMark AI?

OpenMark AI offers both free and paid plans, with details available in the in-app billing section. This provides flexibility for teams of different sizes and budgets, ensuring that everyone can access powerful benchmarking tools.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a specialized Platform-as-a-Service (PaaS) designed to simplify deploying containerized applications in the European Union. It automates the provisioning of databases, caches, and infrastructure, offering a fast, secure path from code to production on bare-metal servers. Developers often explore alternatives for various reasons. Some may seek different pricing models or specific geographic regions for data residency. Others might require advanced features, integrations, or a different balance between simplicity and granular control over their stack. When evaluating other platforms, consider your core needs. Key factors include deployment flexibility, the ease of integrating managed services, compliance requirements, and the overall developer experience. The goal is to find a solution that aligns with your technical requirements and workflow preferences, allowing you to focus on building your application.

OpenMark AI Alternatives

OpenMark AI is a web application designed for task-level benchmarking of large language models (LLMs). It enables users to evaluate over 100 models based on cost, speed, quality, and stability, all in a seamless browser-based environment. This platform is particularly valuable for developers and product teams who need to make informed decisions about which AI model to implement, ensuring that both performance and cost-effectiveness are considered. Users often seek alternatives to OpenMark AI due to various factors such as pricing structures, feature sets, and specific platform requirements. When exploring other options, it's important to consider aspects like ease of use, the breadth of model support, and how well the alternative addresses your unique benchmarking needs. A thorough understanding of these elements can help streamline your decision-making process in selecting the best tool for your AI projects.